Function composition is the process of applying a function to the

output of another function. In algebra, given two functions,

f and g,

( f ° g )( x ) = f( g( x )). The circle symbol is the

composition operator commonly pronounced “composed with” or “after”. One

can say “f composed with g” or “f after g” because g is

evaluated first and its output is passed as an argument to

f.

Every time you write code like the following, you’re composing functions:

const g = n => n + 1;

const f = n => n * 2;

const doStuff = x => {

const afterG = g( x );

const afterF = f( afterG );

return afterF;

};

doStuff( 20 ); // 42

Every time you write a Promise chain, you’re composing

functions:

const g = n => n + 1;

const f = n => n * 2;

const wait = time => new Promise(

( resolve, reject ) => setTimeout(

resolve,

time

)

);

wait( 300 )

.then(() => 20 )

.then( g )

.then( f )

.then( value => console.log( value )); // 42

Every time you chain array method calls, lodash methods, observables (RxJS, etc…) you’re composing functions. If you’re chaining, you’re composing. If you’re passing return values into other functions, you’re composing. If you call two methods in a sequence, you’re composing using this as input data.


When you compose functions intentionally, you’ll do it better.

Composing functions intentionally, we can improve our

doStuff() function to a simple one-liner:

const g = n => n + 1;

const f = n => n * 2;

const doStuffBetter = x => f( g( x ));

doStuffBetter( 20 ); // 42

A common objection to this form is that it’s harder to debug. For example, how would we write this using function composition?

const doStuff = x => {

const afterG = g( x );

console.log( `after g: ${ afterG }` );

const afterF = f( afterG );

console.log( `after f: ${ afterF }` );

return afterF;

};

doStuff( 20 );

/*

"after g: 21"

"after f: 42"

*/

First, let’s abstract that “after f” and “after g” logging into a

little utility called trace():

const trace = label => value => {

console.log( `${ label }: ${ value }` );

return value;

};

Now we can use it like this:

const doStuff = x => {

const afterG = g( x );

trace( "after g" )( afterG );

const afterF = f( afterG );

trace( "after f" )( afterF );

return afterF;

};

doStuff( 20 );

/*

"after g: 21"

"after f: 42"

*/

Popular functional programming libraries like Lodash and Ramda include utilities to make function composition easier. You can rewrite the above function like this:

import pipe from "lodash/fp/flow";

const doStuffBetter = pipe(

g,

trace( "after g" ),

f,

trace( "after f" )

);

doStuffBetter( 20 );

/*

"after g: 21"

"after f: 42"

*/

If you want to try this code without importing anything, you can define pipe like this:

// pipe( ...fns: [ ...Function ]) => x => y

const pipe = ( ...fns ) => x => fns.reduce(( y, f ) => f( y ), x );

Don’t worry if you’re not following how that works, yet. Later on we’ll explore function composition in a lot more detail. In fact, it’s so essential, you’ll see it defined and demonstrated many times throughout this text. The point is to help you become so familiar with it that its definition and usage become automatic.

pipe() creates a pipeline of functions, passing the

output of one function to the input of another. When you use

pipe() (and its twin, compose()) you don’t

need intermediary variables. Writing functions without mention of the

arguments is called point-free style. To do it, you’ll

call a function that returns the new function, rather than declaring the

function explicitly. That means you won’t need the function

keyword or the arrow syntax.
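As a rough illustration of the pipeline idea described above, here is a minimal sketch of pipe() next to its twin, compose(). The compose() definition is an assumption made for illustration (it applies the same functions right to left); libraries such as Lodash and Ramda ship their own versions.

```javascript
// pipe() applies functions left to right; compose() applies them right to left.
const pipe = ( ...fns ) => x => fns.reduce(( y, f ) => f( y ), x );
const compose = ( ...fns ) => x => fns.reduceRight(( y, f ) => f( y ), x );

const g = n => n + 1;
const f = n => n * 2;

// Both are point-free: neither definition mentions its argument.
const piped = pipe( g, f );       // equivalent to x => f( g( x ))
const composed = compose( f, g ); // also equivalent to x => f( g( x ))

console.log( piped( 20 ));    // 42
console.log( composed( 20 )); // 42
```

Which one you reach for is largely a matter of taste: pipe() reads in execution order, while compose() mirrors the mathematical notation.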

Point-free style can be taken too far, but a little bit here and there is great because those intermediary variables add unnecessary complexity to your functions.

There are several benefits to reduced complexity:

The average human brain has only a few shared resources for discrete quanta in working memory, and each variable potentially consumes one of those quanta. As you add more variables, our ability to accurately recall the meaning of each variable is diminished. Working memory models typically involve 4-7 discrete quanta. Above those numbers, error rates dramatically increase.

Using the pipe form, we eliminated 3 variables — freeing up almost half of our available working memory for other things. That reduces our cognitive load significantly. Software developers tend to be better at chunking data into working memory than the average person, but not so much more as to weaken the importance of conservation.

Concise code also improves the signal-to-noise ratio of your code. It’s like listening to a radio — when the radio is not tuned properly to the station, you get a lot of static and interference, and it’s harder to hear the music. When you tune it to the correct station, the noise goes away, and you get a stronger musical signal.

Code is the same way. More concise code expression leads to enhanced comprehension.

These are primitives:

const firstName = "Claude";

const lastName = "Debussy";

And this is a composite:

const fullName = {

firstName,

lastName

};

Likewise, all Arrays, Sets,

Maps, WeakMaps, TypedArrays etc…

are composite datatypes. Any time you build any non-primitive data

structure, you’re performing some kind of object composition. Class

inheritance can be used to construct composite objects, but it’s a

restrictive and brittle way to do it.

We’ll use a more general definition of object composition from “Categorical Methods in Computer Science: With Aspects from Topology” (1989):

“Composite objects are formed by putting objects together such that each of the latter is ‘part of’ the former.”

Class inheritance is just one kind of composite object construction. All classes produce composite objects, but not all composite objects are produced by classes or class inheritance. Favouring object composition over class inheritance means composing objects from small component parts rather than inheriting all properties from an ancestor in a class hierarchy. The latter causes a large variety of well-known problems in object oriented design:

The most common form of object composition is known as mixin composition. It works like ice-cream. You start with an object (like vanilla ice-cream), and then mix in the features you want. Add some nuts, caramel, chocolate swirl, and you wind up with nutty caramel chocolate swirl ice cream.

Building composites with class inheritance:

class Foo{

constructor(){

this.a = "a";

}

}

class Bar extends Foo{

constructor( options ){

super( options );

this.b = "b";

}

}

const myBar = new Bar(); // { a : "a", b : "b" }

Building composites with mixin composition:

const a = { a : "a" };

const b = { b : "b" };

const c = { ...a, ...b }; // { a : "a", b : "b" }

We’ll explore other styles of object composition in more depth later. For now, your understanding should be:

This isn’t about functional programming (FP) vs object-oriented programming (OOP), or one language vs another. Components can take the form of functions, data structures, classes, etc… Different programming languages tend to afford different atomic elements for components. Java affords classes, Haskell affords functions, etc… But no matter what language and what paradigm you favour, you can’t get away from composing functions and data structures. In the end, that’s what it all boils down to.

No matter how you write software, you should compose it well. The essence of software development is composition.

In the beginning, before most of computer science was actually done on computers, there were two great computer scientists: Alonzo Church and Alan Turing. They produced two different but equivalent universal models of computation.

Alonzo Church invented lambda calculus, which is a universal model of computation based on function application. Alan Turing is known for the Turing machine, a model of computation that defines a theoretical device that manipulates symbols on a strip of tape.

Together they collaborated to demonstrate that the two models were functionally equivalent.

Lambda calculus is all about function composition. Let’s discuss the importance of composition in software design. There are three important points that make lambda calculus special:

In const sum = ( x, y ) => x + y, the right-hand side is the anonymous function expression ( x, y ) => x + y.

The binary function ( x, y ) => x + y can be expressed as a unary function like: x => y => x + y. This transformation is known as currying.

Together, these features form a simple, yet expressive vocabulary for composing software using functions as the primary building block. In JavaScript, anonymous and curried functions are optional features. While JavaScript supports important features of lambda calculus, it does not enforce them.
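To make the currying transformation concrete, here is a small sketch. The names curriedSum, add10 and curry2 are hypothetical, chosen only for this illustration; curry2 is a hand-written helper, not a library function.

```javascript
// A binary function and its hand-curried, unary equivalent.
const sum = ( x, y ) => x + y;
const curriedSum = x => y => x + y;

// Hypothetical helper: curries any two-argument function.
const curry2 = fn => x => y => fn( x, y );

// Supplying only the first argument yields a new, more specialised function.
const add10 = curriedSum( 10 );

console.log( sum( 1, 2 ));             // 3
console.log( curriedSum( 1 )( 2 ));    // 3
console.log( add10( 5 ));              // 15
console.log( curry2( sum )( 3 )( 4 )); // 7
```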

The classic function composition takes the output from one function

and uses it as the input for another function. For example, the

composition f . g can be written as

compose2 = f => g => x => f( g( x )). Here’s how

you’d use it:

const double = n => n * 2;

const inc = n => n + 1;

compose2( double )( inc )( 3 ); // 8

The compose2() function takes the double

function as the first argument, the inc function as the

second, and then applies the composition of those two functions to the

argument 3. Looking at the signature of

compose2() again, f is double(),

g is inc() and x is

3. The function call,

compose2( double )( inc )( 3 ), is actually 3 different

function invocations:

1. The first passes double and returns a new function.

2. The returned function takes inc and returns a new function.

3. The next returned function takes 3 and evaluates f( g( x )), which is now double( inc( 3 )).

4. x evaluates to 3 and gets passed into inc().

5. inc( 3 ) evaluates to 4.

6. double( 4 ) evaluates to 8.

7. 8 gets returned from the function.

When software is composed, it can be represented by a graph of function compositions. Consider the following:

append = s1 => s2 => s1 + s2;

append( "Hello, " )( "world!" );

You could represent it visually:

        ___append___
       /            \
     s1              s2
     /                \
"Hello, "          "world!"
     \                /
      \              /
      "Hello, world!"

Lambda calculus was hugely influential on software design and prior to about 1980 many very influential icons of computer science were building software using its methods. Lisp was created in 1958 based on ideas of lambda calculus. Today, Lisp is the second-oldest language that’s still in popular use. Lisp is a popular teaching language in computer science curriculum for three reasons:

Somewhere between 1970 and 1980, the way that software was created

drifted away from simple compositions, and became a list of linear

instructions for the computer to follow. Then came object-oriented

programming — a great idea about component encapsulation and message

passing that got distorted by popular languages into a horrible idea

about inheritance hierarchies and is-a relationships for

feature reuse.

Functional programming was relegated to the sidelines and academia: the blissful obsession of the geekiest of programming geeks, professors in their ivy league towers, and some lucky students who escaped the Java force-feeding obsession of the 1990s to 2010s.

Around 2010, JavaScript exploded in popularity, and JavaScript had important features of lambda calculus. People began to explore a thing called “functional programming”. By 2015, the idea of building software with function composition was popular again. The standard underlying JavaScript (ECMAScript) gained new features, including arrow functions, which made it simpler to read and write functions, curried functions and lambda expressions.

Composition is a simple, elegant and expressive way to clearly model the behavior of software. The process of composing small, deterministic functions produces software that is easier to organize, understand, debug, extend, test and maintain.

To cut a long story short, we are going to use JavaScript to learn functional programming and composition. We will treat you, the programmer, seasoned or beginner, the same way, starting from the very beginning. Set aside preconceptions and dive in.

Instead of using languages that are more commonly associated with the functional paradigm, like Haskell, ClojureScript or Elm, we will use JavaScript. Why? JavaScript has the most important features needed for functional programming:

x => x * 2 is a valid function expression in JavaScript. Concise lambdas make it easier to work with higher-order functions.

In add2( 1 )( 2 ), 1 is a fixed argument in the function returned by add2( 1 ).

JavaScript is a multi-paradigm language. Other styles supported include procedural (imperative) programming like C, object-oriented programming and of course functional programming. The disadvantage of this approach is that imperative and object-oriented styles tend to imply that almost everything needs to be mutable. Mutation is a change to a data structure that happens in-place. For example:

const foo = { bar : "baz" };

foo.bar = "qux"; // mutation - changing the property

Objects need to be mutable so that their properties can be updated by methods. Here are some features that some functional languages have that JavaScript does not:

for, while or do loops.

In JavaScript, purity must be achieved by convention. If you’re not building most of your application by composing pure functions, you’re not programming using the functional style. It’s unfortunately easy in JavaScript to get off track by accidentally creating and using impure functions.

In pure functional languages, immutability is often enforced. Javascript lacks efficient, immutable trie-based data structures used by most functional languages. There are, however, libraries that help such as Immutable.js and Mori.

ES6 introduced the const keyword. A binding declared with

const cannot be reassigned afterwards to refer to a different

value. It’s important to understand that const does

not represent an immutable value.

A const object can’t be reassigned to refer to a

completely different object, but its properties can still be mutated after

declaration. JavaScript can also freeze objects with

Object.freeze(), but they are only frozen at the root level.

This means that a nested object can still have properties of its

properties mutated.
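The difference between const, a shallow freeze and a deep freeze can be sketched as follows. The deepFreeze helper is hypothetical (written for this illustration, not a built-in) and assumes plain, non-cyclic objects and arrays.

```javascript
// const prevents reassignment of the binding, not mutation of the value.
const user = { name : "Ada", address : { city : "London" } };
user.name = "Grace"; // allowed: the object itself is still mutable

// Object.freeze() is shallow: only the root object's own properties are locked.
const frozen = Object.freeze({ name : "Ada", address : { city : "London" } });
frozen.address.city = "Paris"; // allowed: the nested object was never frozen

// Hypothetical helper: freeze nested objects first, then the root.
// Assumes there are no circular references.
const deepFreeze = obj => {
	Object.values( obj ).forEach( value => {
		if( typeof value === "object" && value !== null ) deepFreeze( value );
	});
	return Object.freeze( obj );
};

const locked = deepFreeze({ name : "Ada", address : { city : "London" } });

console.log( user.name );                         // "Grace"
console.log( frozen.address.city );               // "Paris"
console.log( Object.isFrozen( frozen.address ));  // false
console.log( Object.isFrozen( locked.address ));  // true
```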

Whilst JavaScript does support recursion, it lacks widely implemented tail call optimisation. This feature allows functions to reuse stack frames for recursive calls. Without it, any large recursive iteration can make the call stack grow without bounds and cause a stack overflow (not just a website name). Ultimately it isn’t safe to use recursion in JavaScript for large iterations until tail call optimisation is implemented by all major browser vendors.

Side-effects and mutation may be perceived as a flaw in JavaScript but it’s nigh-on impossible to create a meaningful application without side-effects. Pure functional languages like Haskell encapsulate side-effects using boxes called monads, allowing the program to remain pure even though the side-effects represented by the monads are impure.

The largest hurdle with monads is simply explaining what they are; it is often difficult to convey the whys and wherefores.

“A monad is a monoid in the category of endofunctors, what’s the problem?” ~ James Iry, fictionally quoting Philip Wadler, paraphrasing a real quote by Saunders Mac Lane. “A Brief, Incomplete, and Mostly Wrong History of Programming Languages”

As with most things in functional programming the impenetrable academic vocabulary is much harder to understand than the concepts.

The rest of this section is just waffle…

A basic introduction to functional programming with javascript. The best way to use these examples is to follow along using the environment of your choice.

An expression is a chunk of code that evaluates to a value. The following are all valid expressions in javascript:

7 + 1; // 8

7 * 2; // 14

"hello"; // "hello"

The value of an expression can be given a name. When you do so, the

expression is evaluated first and the resulting value is assigned to the

name. You can assign the value to var, let or

const.

const hello = "hello";

hello; // "hello"

var, let and const

JavaScript supports three kinds of variable declaration:

var, let and const. They can be

thought of in terms of order of selection. By default, one selects the

strictest declaration: const. A variable assigned to a

const cannot be reassigned. The final value must be

assigned at declaration time.

Sometimes it is useful to reassign variables. You may have a looping

structure that needs to increment a variable during each loop. Enter the

let variable. This type of variable is scoped to the code

block (between curly braces) and can be modified.

var tells you the least about the variable as it is the

weakest signal. This text will use const in order to get

you in to the habit of defaulting to const for actual

programs.

Javascript has several types including the two we’ve

seen thus far, numbers and strings, as well as

booleans, arrays, objects and

more.

An array is an ordered list of values. It is a container

that can hold many items of varying types and unlike other languages

javascript doesn’t require you to specify the

size/length of the array at declaration.

[ 1, 2, 3 ]; // array literal notation

const arr = [ 1, 2, 3 ]; // assigned to const named arr

An object in JavaScript is a collection of

key: value pairs.

{ key : "value" }; // literal notation

const foo = { bar : "bar" }; // assigned to const named foo

If you want to assign existing variables to object property keys of the same name there’s a shortcut for that. You can just type the variable name instead of providing both a key and a value:

const a = "a";

const oldA = { a : a }; // long, redundant way

const oA = { a }; // short and sweet!

/* Just for posterity, let's do that again */

const b = "b";

const oB = { b };

Objects can be easily composed together into new

objects:

const c = { ...oA, ...oB }; // { a : "a", b : "b" }

Those three dots are the object spread operator. It iterates

over the properties in oA and assigns them to the new

object then does the same for oB. Any keys that already

exist on the new object will be overwritten. The object spread

operator is quite well supported at this time but in the event that you

must support a browser that doesn’t support it you can substitute

Object.assign():

const d = Object.assign({}, oA, oB ); // { a : "a", b : "b" }

The first argument to Object.assign() is the destination

of the assignment. In the example above that is a new, empty

object. If you leave out the empty destination object, the

first object passed will be mutated with the properties of the subsequent arguments. Bear

this in mind.
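A short sketch of that pitfall, using throwaway objects (the names merged, target and result are only for illustration):

```javascript
const oA = { a : "a" };
const oB = { b : "b" };

// Safe: a fresh empty object is the destination, so oA and oB are untouched.
const merged = Object.assign({}, oA, oB );

// Risky: without the empty destination, the first argument itself is mutated
// and returned.
const target = { a : "a" };
const result = Object.assign( target, oB );

console.log( merged );            // { a: 'a', b: 'b' }
console.log( oA );                // { a: 'a' }
console.log( result === target ); // true
console.log( target );            // { a: 'a', b: 'b' }
```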

Both objects and arrays support

destructuring allowing you to extract values from them and assign to

named variables:

const [ t, u ] = [ "a", "b" ];

t; // "a"

u; // "b"

const blep = { blop : "blop" };

// the following is equivalent to:

// const blop = blep.blop;

const { blop } = blep;

blop; // "blop"

As with the array example above, you can destructure to

multiple assignments at once. Here’s a line you’ll see in many

Redux projects: const { type, payload } = action;

This is how the preceding line is used in the context of a reducer

(more on this later):

const myReducer = ( state = {}, action = {}) => {

const { type, payload } = action;

switch( type ){

case "FOO" : return Object.assign({}, state, payload );

default : return state;

}

};

If you want to use a different name for the new binding you can assign a new name:

const { blop : bloop } = blep;

bloop; // "blop"

// read: assign blep.blop as bloop

Ed. The above line is a touch confusing. Why not

simply use bloop as the const name? Hopefully

this usage will become clearer later on.

There are two ways to compare two values: using the “double equals”

operator, ==, or the strict equality operator

===. The former uses type coercion behind the scenes to

allow you to compare values of different types,

e.g. true == 1 evaluates to true. The strict equality operator, or “triple

equals”, performs no coercion, so values of different types are never equal:

true === 1 is false, whereas true === true is true.
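A few comparisons make the difference between the two operators easier to see. The variable names are only labels for this sketch.

```javascript
// Loose equality (==) coerces operands to a common type before comparing.
const looseBoolean = ( true == 1 );          // true: true is coerced to 1
const looseString = ( "1" == 1 );            // true: "1" is coerced to 1
const looseNullish = ( null == undefined );  // true: a special case in the language

// Strict equality (===) never coerces: values of different types are never equal.
const strictBoolean = ( true === 1 );          // false
const strictString = ( "1" === 1 );            // false
const strictNullish = ( null === undefined );  // false

// One surprise applies to both operators: NaN is not equal to itself.
const nanCheck = ( NaN === NaN ); // false

console.log({ looseBoolean, looseString, looseNullish });
console.log({ strictBoolean, strictString, strictNullish, nanCheck });
```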

Other comparison operators include:

> Greater than

< Less than

>= Greater than or equal to

<= Less than or equal to

!= Not equal

!== Not strict equal

&& Logical and

|| Logical or

A ternary expression lets you ask a question using a comparator and evaluates to one of two values depending on whether the answer is truthy or falsy. Only one branch of the ternary will be evaluated:

14 - 7 === 7 ? "Yep!" : "Nope."; // "Yep!"

Javascript has function expressions that can be

assigned to names: const double = x => x * 2; This

means the same thing as the mathematical function

f( x ) = 2x. Spoken aloud, the function reads as

“f of x equals 2x”. To use the

function one simply calls it with a value like so: f( 2 )

which will evaluate to 4.

In other words, f( 2 ) = 4. You can think of a math

function as a mapping from inputs to outputs. f( x ) in

this case is a mapping of input values for x to

corresponding output values equal to the product of the input value and

2. In javascript the value of a function

expression is the function itself:

double; // [Function: double ]. You can see the function

definition using the .toString() method:

double.toString(); // "x => x * 2".

Functions have signatures which consist of:

Type signatures do not need to be specified in JavaScript; types are resolved by the JavaScript engine at runtime. JavaScript lacks its own function signature notation so there are a few competing standards. JSDoc has been very popular historically but it’s awkwardly verbose and few manage to keep the comments up-to-date along with the code. TypeScript and Flow are currently big contenders, and then there’s RType for documentation purposes only. Perhaps this is a lacking field chiefly because good documentation is hard whilst being a largely boring task. The two go hand in hand, resulting in developers not going hand in hand with documentation.

The signature for double is:

double( x : n ) => n.

In spite of the fact that javascript doesn’t require signatures to be annotated, knowing what signatures are and what they mean will still be important in order to communicate efficiently about how functions are used and how functions are composed. Most reusable function composition utilities require you to pass functions which share the same type signature.

JavaScript supports default parameter values. The

following function works like an identity function (a function which

returns the same value you pass in) unless you call it with

undefined or simply pass no argument at all:

const orZero = ( n = 0 ) => n.

To set a default one simply assigns it to the parameter with the

= operator in the function signature. When assigned in this

fashion, type inference tools such as Tern.js,

Flow or TypeScript can infer the type

signature of your function automatically even if you don’t explicitly

declare type annotations.

The result is that, with the right plugins installed in your editor or IDE, you’ll be able to see function signatures displayed inline as you’re typing function calls. You’ll also be able to understand how to use a function at a glance based on its call signature. Using default assignments wherever it makes sense can help you write more self-documenting code.

Note: Parameters with defaults don’t count toward the function’s

.length property, which will throw off utilities such as autocurry that depend on the .length value. Some curry utilities (such as lodash/curry) allow you to pass a custom arity to work around this limitation if you bump into it.
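The effect of defaults on .length, and one way around it, can be sketched like this. curryN is a hypothetical hand-rolled helper that takes the arity explicitly; it is not the Lodash API, and add3WithDefault is an illustrative name.

```javascript
const orZero = ( n = 0 ) => n;

console.log( orZero( 5 ));         // 5
console.log( orZero());            // 0
console.log( orZero( undefined )); // 0
console.log( orZero( null ));      // null: only undefined triggers the default

// .length counts only the parameters before the first one with a default.
const add3 = ( a, b, c ) => a + b + c;
const add3WithDefault = ( a, b, c = 0 ) => a + b + c;

console.log( add3.length );            // 3
console.log( add3WithDefault.length ); // 2

// Hypothetical helper: a curry that is told the arity instead of reading f.length.
const curryN = ( arity, f, arr = [] ) => ( ...args ) => (
	a => a.length >= arity ? f( ...a ) : curryN( arity, f, a )
)([ ...arr, ...args ]);

console.log( curryN( 3, add3WithDefault )( 1 )( 2 )( 3 )); // 6
```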

Javascript functions can take object literals as arguments and use destructuring assignment in the parameter signature in order to achieve the equivalent of named arguments. Notice, you can also assign default values to parameters using the default parameters feature:

const createUser = ({

name = "Anonymous",

avatarThumbnail = "/avatars/anonymous.png"

}) => ({

name,

avatarThumbnail

});

const george = createUser({

name : "George",

avatarThumbnail : "avatars/shades-emoji.png"

});

george;

/*

{

name : "George",

avatarThumbnail : "avatars/shades-emoji.png"

}

*/

A common feature of functions in JavaScript is the

ability to gather together a group of remaining arguments in the

function’s signature using the rest operator: .... The

following function simply discards the first argument and returns the

rest as an array:

const aTail = ( head, ...tail ) => tail;

aTail( 1, 2, 3 ); // [ 2, 3 ]

Rest gathers individual elements together into an

array. Spread does the opposite: it spreads

the elements from an array to individual elements. Consider

this:

const shiftToLast = ( head, ...tail ) => [ ...tail, head ];

shiftToLast( 1, 2, 3 ); // [ 2, 3, 1 ]

Arrays in JavaScript have an iterator

that gets invoked when the spread operator is used. For each item in the

array, the iterator delivers a value. In the expression,

[ ...tail, head ], the iterator copies each element in

order from the tail array into the new

array created by the surrounding literal notation. Since

the head is already an individual element, we just plop it

onto the end of the array and we’re done.

Currying and partial application can be enabled by returning another function:

const highpass = cutoff => n => n >= cutoff;

const gt4 = highpass( 4 ); // highpass() returns a new function

// using the function keyword rather than arrow functions

const highpass = function highpass( cutoff ){

return function( n ){

return n >= cutoff;

};

};

The arrow in JavaScript roughly means “function”.

There are some important differences in function behaviour depending on

which kind of function you use (=> lacks its own

this, and can’t be used as a constructor). When you see

x => x, think “a function that takes x and

returns x”. So you can read

const highpass = cutoff => n => n >= cutoff;

as:

“highpass is a function which takes cutoff

and returns a function which takes n and returns the result

of n >= cutoff”.

Since highpass() returns a function you can use it to

create a more specialized function:

const gt4 = highpass( 4 );

gt4( 6 ); // true

gt4( 3 ); // false

Autocurry lets you curry functions automatically for maximal

flexibility. Presume you have a function add3():

const add3 = curry(( a, b, c ) => a + b + c );. With

autocurry you can use it in several different ways and it will

return the right thing depending on how many arguments you pass in:

add3( 1, 2, 3 ); // 6

add3( 1, 2 )( 3 ); // 6

add3( 1 )( 2, 3 ); // 6

add3( 1 )( 2 )( 3 ); // 6

Whilst JavaScript lacks a built-in

autocurry mechanism you can import one from

Lodash: $ yarn add lodash. Once installed, it is

ready for use with the following import statement:

import curry from "lodash/curry";. Should you prefer to

write your own autocurry function try this on for size:

const curry = (

f, arr = []

) => ( ...args ) => (

a => a.length === f.length ?

f( ...a ) :

curry( f, a )

)([ ...arr, ...args ]);

In mathematical notation function composition is:

f . g. This is the process of passing the return value of one

function to another as an argument. The JavaScript

equivalent is: f( g( x )). It is evaluated from the inside

out:

1. x is evaluated.

2. g() is applied to x.

3. f() is applied to the return value of g( x ).

For example:

const inc = n => n + 1;

inc( double( 2 )); // 5

The value 2 is passed to double() and the

result, or return value, is passed to inc(). The last

function call that runs will be inc( 4 ) // 5. Any expression

can be passed as an argument to a function and will be evaluated before

the function is applied:

inc( double( 2 ) * double( 2 )); // 17. In this example the

two double() calls are evaluated first, giving

4 * 4, and the product, 16, is then passed to inc().

Function composition is central to functional programming and we shall explore this topic in greater detail later on.

Arrays have some built-in methods. A method is a

function associated with an object and is usually a property of the

associated object:

const arr = [ 1, 2, 3 ];

arr.map( double ); // [ 2, 4, 6 ]

In this case, arr is the object,

.map() is a property of the object with a function for a

value. When invoked, the function gets applied to the arguments as well

as a special parameter called this which gets automatically

set when the method is invoked. Note that we’re passing the

double function as a value into map rather

than calling it. That’s because map takes a function as an

argument and applies it to each item in the array. It

returns a new array containing the values returned by

double(). In creating and returning a new

array, the original arr is left untouched and

not mutated.
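Both points (passing the function rather than calling it, and the original array staying untouched) can be checked with a small sketch. The variable names doubled and error are illustrative only.

```javascript
const double = n => n * 2;
const arr = [ 1, 2, 3 ];

// Pass the function itself; map() calls it once per item.
const doubled = arr.map( double );

console.log( doubled );         // [ 2, 4, 6 ]
console.log( arr );             // [ 1, 2, 3 ] (the original is not mutated)
console.log( doubled === arr ); // false: map() returned a new array

// Calling double() instead of passing it hands map() a NaN, not a function.
let error = null;
try {
	arr.map( double());
} catch( e ){
	error = e;
}
console.log( error instanceof TypeError ); // true
```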

Method chaining is the process of directly calling a method on the return value of a function without needing to refer to the return value by name:

const arr = [ 1, 2, 3 ];

arr.map( double ).map( double ); // [ 4, 8, 12 ]

A predicate is a function that returns a

boolean value. The .filter() method takes a

predicate and returns a new list selecting only the items that pass the

predicate to be included in the new list:

[ 2, 4, 6 ].filter( gt4 ); // [ 4, 6 ]. Frequently you’ll

want to filter a list then map those items to a new list:

[ 2, 4, 6 ].filter( gt4 ).map( double ); // [ 8, 12 ].

…and that’s our overview of functional javascript. A bit of a whirlwind and not exhaustive by any means. Later on we’ll develop these ideas in examples. Onwards…

A higher order function is a function that takes a function

as an argument or returns a function. Higher order functions stand in

contrast to first order functions which don’t take a function as an

argument or return a function as output. Earlier we saw examples of

.map() and .filter(). Both of them take a

function as an argument meaning, yes, they are both higher order

functions. Let’s look at an example of a first-order function that

filters all the 4-letter words from a list of words:

const censor = words => {

const filtered = [];

for( let i = 0, { length } = words; i < length; i++ ){

const word = words[ i ];

if( word.length !== 4 ) filtered.push( word );

}

return filtered;

};

censor([ "oops", "gasp", "shout", "sun" ]);

// [ "shout", "sun" ]

Now, what if we want to select all the words that begin with “s”? We could create another function:

const startsWithS = words => {

const filtered = [];

for( let i = 0, { length } = words; i < length; i++ ){

const word = words[ i ];

if( word[ 0 ] == "s" || word[ 0 ] == "S" ) filtered.push( word );

}

return filtered;

}

startsWithS([ "oops", "gasp", "shout", "sun" ]);

// [ "shout", "sun" ]

In just two functions we’ve begun to repeat ourselves. The two functions are very specific and aren’t very portable. We can abstract the iteration and filtering to build all sorts of similar functions. Luckily for us, JavaScript has first class functions. What does that mean?

In essence, we can use functions just like any other bits of data in our programs, making abstraction a lot easier. For instance, we can create a function that abstracts the process of iterating over a list and accumulating a return value by passing in a function that handles the bits that are different. We’ll call that function the reducer:

const reduce = ( reducer, initial, arr ) => {

// shared stuff

let acc = initial;

for( let i = 0, length = arr.length; i < length; i++ ){

// unique stuff in reducer() call

acc = reducer( acc, arr[ i ]);

// more shared stuff

}

return acc;

};

reduce(( acc, curr ) => acc + curr, 0, [ 1, 2, 3 ]); // 6

This reduce() implementation takes a reducer function,

an initial value for the accumulator and an array of data

to iterate over. For each item in the array the reducer is

called passing it the accumulator and the current array

element. The return value is assigned to the accumulator. When it’s

finished applying the reducer to all of the values in the list the

accumulated value is returned.
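Because only the reducer changes, the same reduce() can back other list operations. The sketch below repeats the reduce() described above so it runs on its own, then builds two hypothetical helpers, map() and max(), on top of it.

```javascript
// The same reduce() as described above, repeated so this sketch is self-contained.
const reduce = ( reducer, initial, arr ) => {
	let acc = initial;
	for( let i = 0, length = arr.length; i < length; i++ ){
		acc = reducer( acc, arr[ i ]);
	}
	return acc;
};

// Hypothetical helper: the accumulator is a new array of transformed values.
const map = ( fn, arr ) => reduce(
	( acc, curr ) => acc.concat([ fn( curr )]),
	[],
	arr
);

// Hypothetical helper: the accumulator is the largest value seen so far.
const max = arr => reduce(
	( acc, curr ) => curr > acc ? curr : acc,
	-Infinity,
	arr
);

console.log( map( n => n * 2, [ 1, 2, 3 ])); // [ 2, 4, 6 ]
console.log( max([ 3, 9, 4 ]));              // 9
```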

In the usage example, we call reduce and pass to it a function,

( acc, curr ) => acc + curr, which takes the accumulator

and the current value in the list and returns a new accumulated value.

Next we pass an initial value, 0 and finally the data to

iterate over. With the iteration and value accumulation abstracted we

can now implement a more generalized filter() function:

const filter = (

fn, arr

) => reduce(( acc, curr ) => fn( curr ) ?

acc.concat([ curr ]) :

acc, [], arr

);

In the filter() function everything is shared except the

fn() argument that gets passed. That fn()

argument is called a predicate meaning that it will evaluate to

either true or false. We call

fn() with the current value and if the

fn( curr ) call returns true we then concat the

curr value to the accumulator array. Otherwise

we just return the current accumulator value. Now we can implement

censor() with filter() to filter out 4-letter

words:

const censor = words => filter(

word => word.length !== 4,

words

);

Look how much smaller it is! And so is

startsWithS():

const startsWithS = words => filter(

word => word.startsWith( "s" ),

words

);

Hold your horses. These reduce() and

filter() functions look awfully familiar. Indeed they are

methods of Array and are rather handy.
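As a quick check, here is a sketch of the same two functions written against the built-in Array.prototype.filter() rather than the hand-rolled version. The lowercase comparison in startsWithS() is an assumption, added to match the loop version above, which accepted both "s" and "S":

```javascript
// censor() and startsWithS() using the built-in Array.prototype.filter()
const censor = words => words.filter( word => word.length !== 4 );

// Assumption: match both "s" and "S", as the original loop version did
const startsWithS = words => words.filter(
  word => word.toLowerCase().startsWith( "s" )
);

const words = [ "oops", "gasp", "shout", "sun" ];

console.log( censor( words )); // [ "shout", "sun" ]
console.log( startsWithS( words )); // [ "shout", "sun" ]
```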

Higher order functions are also commonly used to abstract how to

operate on different data types. For instance, .filter()

doesn’t have to operate on arrays of strings.

It could just as easily filter numbers because you can pass

in a function that knows how to deal with a different data type.

Remember highpass()?

const highpass = cutoff => n => n >= cutoff;

const gt3 = highpass( 3 );

[ 1, 2, 3, 4 ].filter( gt3 ); // [ 3, 4 ]

You can use higher order functions to make a function polymorphic. As you can see, higher order functions can be a whole lot more reusable and versatile than their first order cousins. Generally speaking you’ll use higher order functions in combination with very simple first order functions in your real application code.
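A small sketch of that reuse: the same highpass() and the same .filter() work on numbers and on strings, because >= is defined for both (strings compare lexicographically). The sample data and the variable names numbers and fruit are made up for illustration:

```javascript
const highpass = cutoff => n => n >= cutoff;

// Numbers
const numbers = [ 1, 2, 3, 4 ].filter( highpass( 3 ));

// Strings: the same higher order function, unchanged
const fruit = [ "apple", "mango", "zebra" ].filter( highpass( "m" ));

console.log( numbers ); // [ 3, 4 ]
console.log( fruit ); // [ "mango", "zebra" ]
```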

Reduce (aka fold, accumulate) is a utility commonly used in functional programming that lets you iterate over a list, applying a function to an accumulated value and the next item in the list, until the iteration is complete and the accumulated value is returned. Many useful things can be implemented with reduce. Frequently it’s the most elegant way to do any non-trivial processing on a collection of items.

Reduce takes a reducer function and an initial value and then returns

the accumulated value. For Array.prototype.reduce() the

initial list is provided by this so it’s not one of the

arguments:

array.reduce(

reducer : ( accumulator: Any, current : Any ) => Any,

initialValue : Any

) => accumulator : Any

Sum of an array:

[ 2, 4, 6 ].reduce(( acc, n ) => acc + n, 0 ); // 12

For each element in the array the reducer is called and

passed the accumulator and the current value. The reducer’s job is to

“fold” the current value into the accumulated value somehow. How is not

specified and specifying how is the purpose of the reducer function. The

reducer returns the new accumulated value and reduce() moves

on to the next value in the array. The reducer may need an

initial value to start with so most implementations take an initial

value as a parameter.

In the case of this summing reducer the first time the reducer is

called, acc starts at 0 (the value we passed

to .reduce() as the second parameter). The reducer returns

0 + 2 with 2 being the first element in the

array. The next call will then return 2 + 4

using the accumulator and next element respectively. This will continue

until there are no more elements and the final accumulation is returned.

The example above uses an anonymous function to serve as the reducer but

we can abstract it and give it a name:

const summingReducer = ( acc, n ) => acc + n;

[ 2, 4, 6 ].reduce( summingReducer, 0 ); // 12

Normally, reduce() works from left to right. In

javascript we also have [].reduceRight()

which works in the opposite direction. Of course the previous example

would result in the same total but the first value added to the

accumulator would be 6 rather than 2.
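Addition is commutative, so the direction doesn’t show up in a sum. A sketch using string concatenation, where order matters, makes the difference visible (letters and joinReducer are illustrative names):

```javascript
const letters = [ "a", "b", "c" ];

const joinReducer = ( acc, s ) => acc + s;

// Left to right: "" + "a" + "b" + "c"
const leftToRight = letters.reduce( joinReducer, "" );

// Right to left: "" + "c" + "b" + "a"
const rightToLeft = letters.reduceRight( joinReducer, "" );

console.log( leftToRight ); // "abc"
console.log( rightToLeft ); // "cba"
```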

It’s easy to define map(), filter(),

forEach() and lots of other interesting things using

reduce:

const map = ( fn, arr ) => arr.reduce(( acc, item, index, arr ) => {

return acc.concat([ fn( item, index, arr )]);

}, []);

For map, the accumulated value is a new array containing the result of

applying the function passed in (fn) to each element.

const filter = ( fn, arr ) => arr.reduce(( newArr, item ) => {

return fn( item ) ? newArr.concat([ item ]) : newArr;

}, []);

Filter works in a similar manner to map, except that we take a

predicate function and conditionally append the current value

to the new array if the predicate returns true for it.

For each of the above examples you have a list of data, iterate over that data applying some function and folding the results into an accumulated value. Lots of applications spring to mind. But what if your data is a list of functions?

Reduce is also a convenient way to compose functions. Remember

function composition: if you want to apply the function f

to the result of g of x i.e. the composition,

f . g, you could use the following

javascript: f( g( x ));

Reduce lets us abstract that process to work on any number of

functions so you could easily define a function that would represent:

f( g( h( x ))).

To make that happen we’ll need to run reduce in reverse. That is,

right-to-left. Thankfully javascript provides the means

to do that:

const compose = ( ...fns ) => x => fns.reduceRight(( v, f ) => f( v ), x );

Note: If javascript had not provided

[].reduceRight() you could still implement reduceRight() using reduce(). I’ll leave it to adventurous readers to figure out how.

compose() is great if you want to represent the

composition from the inside out, that is, in the math notation sense.

But what if you want to think of it as a sequence of events? Imagine we

want to add 1 to a number and then double it. With

compose() that would be:

const add1 = n => n + 1;

const double = n => n * 2;

const add1ThenDouble = compose(

double,

add1

);

add1ThenDouble( 2 ); // 6

// (( 2 + 1 = 3 ) * 2 = 6 )

See the problem? The first step is listed last so in order to

understand the sequence you’ll need to start at the bottom of the list

and work your way backwards to the top. Or we can reduce left-to-right

as you normally would instead of right-to-left:

const pipe = ( ...fns ) => x => fns.reduce(( v, f ) => f( v ), x );

Now you can write add1ThenDouble() like this:

const add1ThenDouble = pipe(

add1,

double

);

add1ThenDouble( 2 ); // 6

// (( 2 + 1 = 3 ) * 2 = 6 )

We’ll go into more detail on compose() and

pipe() later. What you should understand right now is that

reduce() is a very powerful tool and you really need to

learn it. Just be aware that if you get very tricky with reduce some

people have a hard time following along.

A functor data type is something you can map over.

It’s a container which has an interface which can be used to apply a

function to the values inside it. When you see a functor you should

think “mappable”. Functor types are typically represented as an

object with a .map() method that maps from inputs to

outputs while preserving structure. In practice, “preserving structure”

means that the return value is the same type of functor (though values

inside the container may be a different type).

A functor supplies a box with zero or more things inside and a

mapping interface. An array is a good example of a functor

but many other kinds of objects can be mapped over as well such as

promises, streams, trees,

objects etc… JavaScript’s built-in

array and promise objects act like functors.

For collections (arrays, streams, etc…),

.map() typically iterates over the collection and applies

the given function to each value in the collection but not all functors

iterate. Functors are really about applying a function in a specific

context.
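To see “preserving structure” outside of arrays, here is a minimal sketch of a mappable binary tree. Leaf, Branch and toArray() are hypothetical names invented for this illustration; they don’t come from any library:

```javascript
// A Leaf holds one value; mapping returns a new Leaf
const Leaf = value => ({
  map : fn => Leaf( fn( value )),
  toArray : () => [ value ]
});

// A Branch holds two subtrees; mapping returns a Branch of the same shape
const Branch = ( left, right ) => ({
  map : fn => Branch( left.map( fn ), right.map( fn )),
  toArray : () => [ ...left.toArray(), ...right.toArray() ]
});

const tree = Branch( Leaf( 1 ), Branch( Leaf( 2 ), Leaf( 3 )));

const doubled = tree.map( n => n * 2 );

console.log( doubled.toArray()); // [ 2, 4, 6 ]
console.log( tree.toArray()); // [ 1, 2, 3 ] (the original tree is untouched)
```

The function is applied in the context of the tree: the values change, but the shape of the tree does not.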

Promises use the name .then() instead of

.map(). You can usually think of .then() as an

asynchronous .map() method except when you have a nested

promise in which case it automatically unwraps the outer

promise. Again, for values which are not

promises, .then() acts like an asynchronous

.map(). For values that are promises

themselves .then() acts like the .chain()

method from monads (sometimes also called .bind() or

.flatMap()). So, promises are not quite

functors and not quite monads but in practice you can usually treat them

as either. Don’t worry about what monads are, yet. Monads are a kind of

functor so you need to learn functors first. Lots of libraries exist

that will turn a variety of other things into functors too.
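A short sketch of the two behaviours of .then() described above. The names mapped and chained are illustrative:

```javascript
const double = n => n * 2;

// The callback returns a plain value: .then() behaves like an async .map()
const mapped = Promise.resolve( 21 ).then( double );

// The callback returns a promise: .then() flattens it, like .chain()
const chained = Promise.resolve( 21 ).then(
  n => Promise.resolve( double( n ))
);

mapped.then( value => console.log( value )); // 42
chained.then( value => console.log( value )); // 42, not a promise of 42
```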

In Haskell, the functor type is defined as:

fmap :: (a -> b) -> f a -> f b

Given a function that takes an a and returns a

b and a functor with zero or more a inside it,

fmap returns a box with zero or more b inside

it. The f a and f b bits can be read as “a

functor of a” and “a functor of b”, meaning

f a has a inside the box and f b

has b inside the box.

Using a functor is easy - just call map():

const f = [ 1, 2, 3 ];

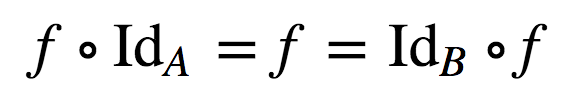

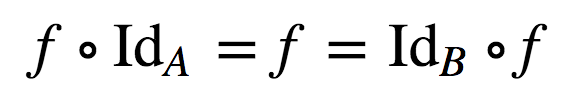

f.map( double ); // [ 2, 4, 6 ]

Categories have two important properties: identity and composition.

Since a functor is a mapping between categories, functors must respect identity and composition. Together they’re known as the functor laws.

If you pass the identity function (x => x) into

f.map(), where f is the functor, the result

should be equivalent to f:

const f = [ 1, 2, 3 ];

f.map( x => x ); // [ 1, 2, 3 ]

Functors must obey the composition law:

F.map( x => f( g( x ))) is equivalent to

F.map( g ).map( f ).

Function Composition is the application of one function to the result

of another e.g. given an x and the functions,

f and g, the composition

( f ° g )( x ) means f( g ( x )).

A lot of functional programming terms come from category theory and the essence of category theory is composition. Category theory is scary at first but easy. Like jumping off a diving board or riding a roller coaster. Here’s the foundation of category theory in a few bullet points:

* For any chain of objects a -> b -> c, there must be a composition which goes directly from a -> c.

Say you have a function g that takes an a

and returns a b and another function f that

takes a b and returns a c; there must also be

a function h that represents the composition of

f and g. So, the composition from

a -> c is the composition

f ° g (f after g). Hence,

h( x ) = f( g ( x )). Function composition works right to

left, not left to right, which is why f ° g is frequently

called f after g.

Composition is associative. Basically that means that when you’re

composing multiple functions (morphisms if you’re feeling fancy) you

don’t need parentheses:

h ° ( g ° f ) = ( h ° g ) ° f = h ° g ° f.
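Associativity is easy to check in code. This sketch assumes a hypothetical two-function helper, compose2(), and three arbitrary sample functions:

```javascript
// compose2 is a hypothetical helper: compose2( f, g ) is "f after g"
const compose2 = ( f, g ) => x => f( g( x ));

const f = n => n + 1;
const g = n => n * 2;
const h = n => n - 3;

const groupedRight = compose2( h, compose2( g, f )); // h ° ( g ° f )
const groupedLeft = compose2( compose2( h, g ), f ); // ( h ° g ) ° f

console.log( groupedRight( 10 )); // 19
console.log( groupedLeft( 10 )); // 19
```

Both groupings run f first, then g, then h: (( 10 + 1 ) * 2 ) - 3 = 19.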

Let’s take another look at the composition law in

javascript. Given a functor, F:

const F = [ 1, 2, 3 ];

The following are equivalent:

F.map( x => f( g( x )));

F.map( g ).map( f );
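A runnable sketch of that equivalence, using two arbitrary sample functions for f and g. The sameContents() helper is an assumption added here because === compares arrays by reference, not by content:

```javascript
const F = [ 1, 2, 3 ];

const f = n => n + 1;
const g = n => n * 2;

// Hypothetical helper: compare two arrays by their serialized content
const sameContents = ( a, b ) => JSON.stringify( a ) === JSON.stringify( b );

const composed = F.map( x => f( g( x ))); // [ 3, 5, 7 ]
const chained = F.map( g ).map( f ); // [ 3, 5, 7 ]

console.log( sameContents( composed, chained )); // true
```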

An endofunctor is a functor that maps from a category back to the

same category. A functor can map from category to category:

X -> Y. An endofunctor maps from a category to the same

category: X -> X. A monad is an endofunctor.

Remember:

“A monad is just a monoid in the category of endofunctors. What’s the problem?”

Hopefully that quote is starting to make a little more sense. We’ll get to monoids and monads later.

Here’s a simple example of a functor:

const Identity = value => ({

map : fn => Identity( fn( value ))

});

As you can see, it satisfies the functor laws:

// trace() is a utility to let you easily inspect the content

const trace = x => {

console.log( x );

return x;

};

const u = Identity( 2 );

// Identity law

u.map( trace ); // 2

u.map( x => x ).map( trace ); // 2

const f = n => n + 1;

const g = n => n * 2;

// Composition law

const r1 = u.map( x => f( g( x )));

const r2 = u.map( g ).map( f );

r1.map( trace ); // 5

r2.map( trace ); // 5

Now you can map over any data type just like you can map over an

array. Nice! That’s about as simple as a functor can get in

javascript but it’s missing some features we expect

from data types in javascript. Let’s add them. Wouldn’t

it be cool if the + operator could work for number and

string values?

To make that work all we need to do is implement

.valueOf() which also seems like a convenient way to unwrap

the value from the functor:

const Identity = value => ({

map : fn => Identity( fn( value )),

valueOf : () => value

});

const ints = ( Identity( 2 ) + Identity( 4 ));

trace( ints ); // 6

const hi = ( Identity( "h" ) + Identity( "i" ));

trace( hi ); // "hi"

Super. But what if we want to inspect an Identity

instance in the console? It would be cool if it would say

Identity( value ). Let’s add a .toString()

method: toString : () => `Identity( ${ value })`.
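As a sketch, here is Identity with that method added, plus two standard ways JavaScript ends up calling it: String() and template literals. The + operator still prefers .valueOf():

```javascript
const Identity = value => ({
  map : fn => Identity( fn( value )),
  valueOf : () => value,
  toString : () => `Identity( ${ value })`
});

const two = Identity( 2 );

// String conversion calls toString()
const viaString = String( two );
const viaTemplate = `${ two }`;

// Arithmetic still unwraps the value through valueOf()
const sum = two + 1;

console.log( viaString ); // "Identity( 2)"
console.log( viaTemplate ); // "Identity( 2)"
console.log( sum ); // 3
```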

We should probably also enable the standard JS iteration protocol. We can do that by adding a custom iterator:

[ Symbol.iterator ]: () => {

let first = true;

return ({

next : () => {

if( first ){

first = false;

return ({

done : false,

value

});

}

return ({

done : true

});

}

});

}

Now this will work:

// [ Symbol.iterator ] enables standard JS iterations:

const arr = [ 6, 7, ...Identity( 8 )];

trace( arr ); // [ 6, 7, 8 ]

What if one wants to take an Identity( n ) and return an

array of Identities containing n + 1, n + 2

and so on? Easy right?

const fRange = ( start, end ) => Array.from(

{ length : end - start + 1 },

( x, i ) => Identity( i + start )

);

How do we make this work with any functor? What if we had a spec that said that each instance of a data type must have a reference to its constructor? Then you could do this:

const fRange = (

start, end

) => Array.from(

{ length : end - start + 1 },

// change `Identity` to `start.constructor`

( x, i ) => start.constructor( i + start )

);

const range = fRange( Identity( 2 ), 4 );

range.map( x => x.map( trace )); // 2, 3, 4

To test if the value is a functor we could add a static method on

Identity to check. We should throw in a static

.toString() while we’re at it:

Object.assign( Identity, {

toString : () => "Identity",

is : x => typeof x.map === "function"

});

Now the whole kit and caboodle:

const Identity = value => ({

map : fn => Identity( fn( value )),

valueOf : () => value,

toString : () => `Identity( ${ value })`,

[ Symbol.iterator ] : () => {

let first = true;

return ({

next : () => {

if( first ){

first = false;

return ({

done : false,

value

});

}

return ({

done : true

});

}

});

},

constructor : Identity

});

Object.assign( Identity, {

toString : () => "Identity",

is : x => typeof x.map === "function"

});

Note you don’t need all this extra stuff for something to qualify as

a functor or an endofunctor. It’s strictly for convenience. All you need

for a functor is a .map() interface that satisfies the

functor laws.

Functors are great for lots of reasons. Most importantly, they’re an

abstraction that you can use to implement lots of useful things in a way

that works with any data type. For instance, what if you want to kick

off a chain of operations but only if the value inside the functor is

not undefined or null?

// Create the predicate

const exists = x => ( x.valueOf() !== undefined && x.valueOf() !== null );

const ifExists = x => ({

map : fn => exists( x ) ? x.map( fn ) : x

});

const add1 = n => n + 1;

const double = n => n * 2;

// Nothing happens…

ifExists( Identity( undefined )).map( trace );

// Still nothing happens…

ifExists( Identity( null )).map( trace );

// 42

ifExists( Identity( 20 ))

.map( add1 )

.map( double )

.map( trace )

;

Of course, functional programming is all about composing tiny functions to create higher level abstractions. What if you want a generic map that works with any functor? That way you can partially apply arguments to create new functions. Easy. Pick your favourite auto-curry or use this magic spell from before:

const curry = (

f, arr = []

) => ( ...args ) => (

a => a.length === f.length ?

f( ...a ) :

curry( f, a )

)([ ...arr, ...args ]);

Now we can customise map:

const map = curry(( fn, F ) => F.map( fn ));

const double = n => n * 2;

const mdouble = map( double );

mdouble( Identity( 4 )).map( trace ); // 8

Functors are things we can map over. More specifically, a functor is a mapping from category to category. A functor can even map from a category back to the same category (i.e. an endofunctor).

A category is a collection of objects with arrows between objects.

Arrows represent morphisms (aka functions, aka compositions). Each

object in a category has an identity morphism (x => x).

For any chain of objects A -> B -> C there must exist

a composition A -> C.

Functors are great higher-order abstractions that allow you to create a variety of generic functions that will work for any data type.
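As a sketch of one such generic function, the same partially applied map works on an array and on an Identity. For brevity this uses a hand-curried map() rather than an auto-curry helper, and a pared-down Identity; both are simplifications made for this example:

```javascript
// Hand-curried map: takes the function first, the functor second
const map = fn => F => F.map( fn );

// Pared-down Identity functor with a way to unwrap the value
const Identity = value => ({
  map : fn => Identity( fn( value )),
  valueOf : () => value
});

const double = n => n * 2;

const mdouble = map( double );

const fromArray = mdouble([ 1, 2, 3 ]);
const fromIdentity = mdouble( Identity( 4 ));

console.log( fromArray ); // [ 2, 4, 6 ]
console.log( fromIdentity.valueOf()); // 8
```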

Functional mixins are composable factory functions which connect together in a pipeline, each function adding some properties or behaviours like workers on an assembly line. Functional mixins don’t depend on or require a base factory or constructor: simply pass any arbitrary object into a mixin and an enhanced version of that object will be returned.

Functional mixins features:

All modern software development is really composition: we break a large, complex problem down into smaller, simpler problems, and then compose solutions to form an application.

The atomic units of composition are one of two things:

Application structure is defined by the composition of those atomic

units. Often, composite objects are produced using class inheritance,

where a class inherits the bulk of its functionality from a parent class,

and extends or overrides pieces. The problem with that approach is that

it leads to is-a thinking, e.g. “an admin is an employee”,

causing lots of design problems:

If an admin is an employee, how do you handle a situation where you hire an outside consultant to perform administrative duties temporarily? If you knew every requirement in advance, perhaps class inheritance could work, but I’ve never seen that happen. Given enough usage, applications and requirements inevitably grow and evolve over time as new problems and more efficient processes are discovered.

Mixins offer a more flexible approach.

“Favour object composition over class inheritance” (the Gang of Four, “Design Patterns: Elements of Reusable Object Oriented Software”)

Mixins are a form of object composition, where component features get mixed into a composite object so that properties of each mixin become properties of the composite object.

The term “mixin” in OOP comes from mixin ice-cream shops. Instead of having a whole lot of ice-cream flavours in different pre-mixed buckets, you have vanilla ice-cream and a bunch of separate ingredients that can be mixed in to create custom flavours for each customer.

Object mixins are similar: you start with an empty object and mix in features to extend it. Because JavaScript supports dynamic object extension and objects without classes, using object mixins is trivially easy, so much so that it is the most common form of inheritance in JavaScript by a huge margin. Let’s have a look at an example:

const chocolate = {

hasChocolate : () => true

};

const caramelSwirl = {

hasCaramelSwirl : () => true

};

const pecans = {

hasPecans : () => true

};

const iceCream = Object.assign({}, chocolate, caramelSwirl, pecans );

/* Or if your environment supports object spread

const iceCream = { ...chocolate, ...caramelSwirl, ...pecans };

*/

console.log( `

hasChocolate : ${ iceCream.hasChocolate() }

hasCaramelSwirl : ${ iceCream.hasCaramelSwirl() }

hasPecans : ${ iceCream.hasPecans() }

` );

/* Which logs:

hasChocolate : true

hasCaramelSwirl : true

hasPecans : true

*/

Functional inheritance is the process of inheriting features by applying an augmenting function to an object instance. The function supplies a closure scope which you can use to keep some data private. The augmenting function uses dynamic object extension to extend the object instance with new properties and methods.

Take a gander at an example from Douglas Crockford, who coined the term:

// Base object factory

function base( spec ){

var that = {}; // create an empty object

that.name = spec.name; // Add a "name" property

return that; // Return object

}

// Construct a child object, inheriting from "base"

function child( spec ){

// Create the object through the "base" constructor

var that = base( spec );

that.sayHello = function(){ // Augment that object

return "Hello, I'm " + that.name;

};

return that; // Return the augmented object

}

// Usage

var result = child({ name : "a functional object" });

console.log( result.sayHello()); // "Hello, I'm a functional object"

Because child() is tightly coupled to

base(), when you add grandchild(),

greatGrandchild() etc…, you’ll opt into most of the common

problems from class inheritance.

Functional mixins are composable functions which mix new properties or behaviours with properties from a given object. Functional mixins don’t depend on or require a base factory or constructor: simply pass any arbitrary object into a mixin and it will be extended.

An example:

const flying = o => {

let isFlying = false;

return Object.assign({}, o, {

fly(){

isFlying = true;

return this;

},

isFlying : () => isFlying,

land(){

isFlying = false;

return this;

}

});

};

const bird = flying({});

console.log( bird.isFlying()); // false

console.log( bird.fly().isFlying()); // true

Notice that when we call flying() we need to pass an

object in to be extended. Functional mixins are designed for functional

composition. Let’s create something to compose with:

const quacking = quack => o => Object.assign({}, o, {

quack : () => quack

});

const quacker = quacking( "Quack!" )({});

console.log( quacker.quack()); // "Quack!"

Functional mixins can be composed with simple function composition:

const createDuck = quack => quacking( quack )( flying({}));

const duck = createDuck( "Quack!" );

console.log( duck.fly().quack());

That looks a little awkward to read, though. It can also be a bit tricky to debug or re-arrange the order of composition.

Of course, this is standard function composition, and we already know

some better ways to do that using compose() or

pipe(). If we use pipe() to reverse the

function order, the composition will read like

Object.assign({}, … ) or

{ ...object, ...spread } – preserving the same order of

precedence. In case of property collisions, the last object in wins.

const pipe = ( ...fns ) => x => fns.reduce(( y, f ) => f( y ), x );

// OR… import pipe from "lodash/fp/flow";

const createDuck = quack => pipe(

flying,

quacking( quack )

)({});

const duck = createDuck( "Quack!" );

console.log( duck.fly().quack());

You should always use the simplest possible abstraction to solve the problem you’re working on. Start with a pure function. If you’re in need of an object with a persistent state, try a factory function. If you need to build more complex objects, try functional mixins.
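To illustrate the “last object in wins” rule for property collisions in a pipe() of mixins, here is a minimal sketch. The barking and meowing mixins are hypothetical and deliberately define the same property:

```javascript
const pipe = ( ...fns ) => x => fns.reduce(( y, f ) => f( y ), x );

// Two hypothetical mixins that both define `sound`
const barking = o => Object.assign({}, o, { sound : () => "Woof!" });
const meowing = o => Object.assign({}, o, { sound : () => "Meow!" });

// meowing is applied last, so its `sound` overwrites the one from barking
const confusedPet = pipe( barking, meowing )({});

console.log( confusedPet.sound()); // "Meow!"
```

Swapping the order to pipe( meowing, barking ) would make barking win instead.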

Here are some good use-cases for functional mixins:

* Application state management, e.g. a Redux store.
* Certain cross-cutting concerns and services, e.g. a centralized logger.
* Composable functional data types, e.g. the javascript Array type implements Semigroup, Functor and Foldable. Some algebraic structures can be derived in terms of other algebraic structures, meaning that certain derivations can be composed into a new data type without customisation.

React users: class is fine for

lifecycle hooks because callers aren’t expected to use new,

and documented best-practice is to avoid inheriting from any components

other than the React-provided base components. I use and recommend HOCs

(Higher Order Components) with function composition to compose UI

components.

Most problems can be elegantly solved using pure functions. The same is not true of functional mixins. Like class inheritance, functional mixins can cause problems of their own. In fact, it’s possible to faithfully reproduce all of the features and problems of class inheritance using functional mixins.

You can avoid that, though, using the following advice:

* Use the simplest practical implementation. Start on the left and move to the right only as needed: pure functions > factories > functional mixins > classes.
* Avoid the creation of is-a relationships between objects, mixins or data types.
* Avoid implicit dependencies between mixins. Wherever possible, functional mixins should be self-contained, and have no knowledge of other mixins.
* “Functional mixins” doesn’t mean “functional programming”.
* There may be side-effects when you access a property using Object.assign() or object spread syntax ({ ... }). You’ll also skip any non-enumerable properties. ES2017 added Object.getOwnPropertyDescriptors() to get around this problem.
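The last point can be sketched as follows. The source object, its hidden property and its counter getter are made up for this example; the point is only to contrast Object.assign() with copying property descriptors:

```javascript
let reads = 0;

// A hypothetical object with a non-enumerable property and a getter
const source = Object.defineProperties({}, {
  hidden : { value : "secret", enumerable : false },
  counter : { get : () => ++reads, enumerable : true }
});

// Object.assign() runs the getter and skips the non-enumerable property
const assigned = Object.assign({}, source );

// Copying descriptors keeps the getter and the non-enumerable property
const cloned = Object.defineProperties(
  {},
  Object.getOwnPropertyDescriptors( source )
);

console.log( reads ); // 1 (the getter ran once, during Object.assign())
console.log( assigned.hidden ); // undefined
console.log( cloned.hidden ); // "secret"
```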

If you’re tempted to use functional mixins in any scope larger than

your own small projects, you should probably look at

stamps, instead. The Stamp Specification is a standard for

sharing and reusing composable factory functions, with built-in

mechanisms to deal with property descriptors, prototype delegation and

so on.

I rely mostly on function composition to compose behaviour and

application structure and only rarely need functional mixins or stamps.

I never use class inheritance unless I’m descending directly from a

third-party base class such as React.Component. I never build

my own inheritance hierarchies.

Class inheritance is very rarely (perhaps never) the best approach in

javascript but that choice is sometimes made by a

library or framework that you don’t control. In that case, using

class is sometimes practical, provided the library:

1. Does not require you to extend your own classes (i.e. does not require you to build multi-level class hierarchies).
2. Does not require you to directly use the new keyword. In other words, the framework handles instantiation for you.

Both Angular 2+ and React meet those requirements so you can safely use classes with them as long as you don’t extend your own classes. React allows you to avoid using classes if you wish, but your components may fail to take advantage of optimisations built into React’s base classes and your components won’t look like the components in documentation examples. In any case, you should always prefer the function form for React components when it makes sense.

In some browsers, classes may provide javascript

engine optimisations that are not available otherwise. In almost all

cases, those optimisations will not have a significant impact on your

app’s performance. In fact, it’s possible to go many years without ever

needing to worry about class performance differences.

Object creation and property access are always very fast (millions of

ops/sec) regardless of how you build your objects.

That said, authors of general purpose utility libraries similar to

RxJS, Lodash, etc… should investigate possible performance benefits of

using class to create object instances. Unless you have

measured a significant bottleneck that you can provably and

substantially reduce using class, you should optimise for

clean, flexible code instead of worrying about performance.

You may be tempted to create functional mixins designed to work together. Imagine you want to build a configuration manager for your app that logs warnings when you try to access configuration properties that don’t exist. It’s possible to build it like this:

// in its own module…

const withLogging = logger => o => Object.assign({}, o, {

log( text ){

logger( text )

}

});

// in a different module with no explicit mention of

// withLogging — we just assume it's there…

const withConfig = config => ( o = {

log : ( text = "" ) => console.log( text )

}) => Object.assign({}, o, {

get( key ){

return config[ key ] == undefined ?

// vvv implicit dependency here… oops! vvv

this.log( `Missing config key: ${ key }` ) :

// ^^^ implicit dependency here… oops! ^^^

config[ key ];

}

});

// in yet another module that imports withLogging and withConfig…

const createConfig = ({ initialConfig, logger }) =>

pipe(

withLogging( logger ),

withConfig( initialConfig )

)({})

;

// elsewhere…

const initialConfig = {

host : "localhost"

};

const logger = console.log.bind( console );

const config = createConfig({ initialConfig, logger });

console.log( config.get( "host" )); // "localhost"

config.get( "notThere" ); // "Missing config key: notThere"

However, it’s also possible to build it like this:

// import withLogging() explicitly in withConfig module

import withLogging from "./with-logging";

const addConfig = config => o => Object.assign({}, o, {

get( key ){

return config[ key ] == undefined ?

this.log( `Missing config key: ${ key }` ) :

config[ key ];

}

});

const withConfig = ({ initialConfig, logger }) => o =>

pipe(

// vvv compose explicit dependency in here vvv

withLogging( logger ),

// ^^^ compose explicit dependency in here ^^^

addConfig( initialConfig )

)( o );

// The factory only needs to know about withConfig now…

const createConfig = ({ initialConfig, logger }) =>

withConfig({ initialConfig, logger })({});

// elsewhere, in a different module…

const initialConfig = {

host : "localhost"

};

const logger = console.log.bind( console );

const config = createConfig({ initialConfig, logger });

console.log( config.get( "host" )); // "localhost"

config.get( "notThere" ); // "Missing config key: notThere"

The correct choice depends on a lot of factors. It is valid to require a lifted data type for a functional mixin to act on, but if that’s the case, the API contract should be made explicit in the function signature and API documentation.

That’s the reason that the implicit version has a default value for

o in the signature. Since JavaScript lacks

type annotation capabilities, we can fake it by providing default

values:

const withConfig = config => ( o = {

log : ( text = "" ) => console.log( text )

}) => Object.assign({}, o, {

// …

});

If you’re using TypeScript or Flow, it’s probably better to declare an explicit interface for your object requirements.

“Functional” in the context of functional mixins does not always have the same purity connotations as “functional programming”. Functional mixins are commonly used in OOP style, complete with side-effects. Many functional mixins will alter the object argument you pass to them. Caveat emptor.

By the same token, some developers prefer a functional programming

style, and will maintain an identity reference to the object you pass

in. You should code your mixins and the code that uses them assuming a

random mix of both styles. That means that if you need to return the

object instance, always return this instead of a reference

to the instance object in the closure — in functional code, chances are

those are not references to the same objects. Additionally, always

assume that the object instance will be copied by assignment using

Object.assign() or { ...object, ...spread }

syntax. That means that if you set non-enumerable properties they will

probably not work on the final object:

const a = Object.defineProperty({}, "a", {

enumerable : false,

value : "a"

});

const b = {

b : "b"

};

console.log({ ...a, ...b }); // { b : "b" }

If you use functional mixins that you didn’t create in your functional code, don’t assume the code is pure. Assume that the base object may be mutated, and assume that there may be side-effects and no referential transparency guarantees, i.e. it is frequently unsafe to memoize factories composed of functional mixins.

Functional mixins are composable factory functions which add

properties and behaviours to objects like stations in an assembly line.

They are a great way to compose behaviours from multiple source features

(has-a, uses-a, can-do), as opposed to inheriting all the

features of a given class (is-a).

Be aware, “functional mixins” doesn’t imply “functional programming” — it simply means, “mixins using functions”. Functional mixins can be written using a functional programming style, avoiding side-effects and preserving referential transparency, but that is not guaranteed. There may be side-effects and nondeterminism in third-party mixins.

Start with the simplest implementation and move to more complex implementations only as required:

Functions > objects > factory functions > functional mixins > classes

A factory function is any function which is not a class or

constructor that returns a (presumably new) object. In

JavaScript, any function can return an object. When it

does so without the new keyword, it’s a factory

function.

Factory functions have always been attractive in

JavaScript because they offer the ability to easily

produce object instances without diving into the complexities of classes

and the new keyword.
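To make the contrast concrete, here is a small sketch that builds the same kind of object with a class and with a factory. PointClass and createPoint are hypothetical names used only for illustration:

```javascript
// Class version: callers must remember the new keyword.
class PointClass {
  constructor( x, y ){
    this.x = x;
    this.y = y;
  }
}

// Factory version: a plain function that returns an object.
const createPoint = ( x, y ) => ({ x : x, y : y });

const a = new PointClass( 1, 2 );
const b = createPoint( 1, 2 );

console.log( a.x + a.y ); // 3
console.log( b.x + b.y ); // 3

// Calling PointClass( 1, 2 ) without new throws a TypeError.
// createPoint() has no such requirement.
```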

JavaScript provides a very handy object literal syntax. It looks something like this:

const user = {

userName : "echo",

avatar : "echo.png"

};

Like JSON (which is based on JavaScript’s object

literal notation), the left side of the : is the property

name and the right side is the value. You can access props with dot

notation: console.log( user.userName ); // "echo".

You can access computed property names using square bracket notation:

const key = "avatar"; console.log( user[ key ]); // "echo.png".

If you have variables in-scope with the same name as your intended

property names, you can omit the colon and the value in the object

literal creation:

const userName = "echo";

const avatar = "echo.png";

const user = {

userName,

avatar

};

console.log( user );

// { avatar : "echo.png", userName : "echo" }

Object literals support concise method syntax. We can add a

.setUserName() method:

const userName = "echo";

const avatar = "echo.png";

const user = {

userName,

avatar,

setUserName( userName ){

this.userName = userName;

return this;

}

};

console.log( user.setUserName( "Foo" ).userName ); // "Foo"

In concise methods, this refers to the object which the

method is called on. To call a method on an object, simply access the

method using object dot notation and invoke it by using parentheses,

e.g. game.play() would apply .play() to the

game object. In order to apply a method using dot notation,

that method must be a property of the object in question. You can also

apply a method to any other arbitrary object using the function

prototype methods, .call(), .apply() or .bind().

In this case, user.setUserName( "Foo" ) applies

.setUserName() to user, so

this === user. In the .setUserName() method,

we change the .userName property on the user

object via its this binding and return the same object

instance for method chaining.
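As a rough illustration of applying a method to an object that doesn’t own it, the sketch below borrows .setUserName() from user and applies it to a hypothetical guest object using .call(), .apply() and .bind():

```javascript
const user = {
  userName : "echo",
  setUserName( userName ){
    this.userName = userName;
    return this;
  }
};

// Hypothetical second object with no setUserName() method of its own.
const guest = { userName : "guest" };

// .call() takes the `this` value, then the arguments one by one.
user.setUserName.call( guest, "Foo" );
console.log( guest.userName ); // "Foo"

// .apply() takes the `this` value, then the arguments as an array.
user.setUserName.apply( guest, [ "Bar" ]);
console.log( guest.userName ); // "Bar"

// .bind() returns a new function with `this` fixed to guest.
const setGuestName = user.setUserName.bind( guest );
setGuestName( "Baz" );
console.log( guest.userName ); // "Baz"

// The original object is untouched.
console.log( user.userName ); // "echo"
```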

If you need to create many objects, you’ll want to combine the power of object literals and factory functions. With a factory function, you can create as many user objects as you want. If you’re building a chat app, for instance, you can have a user object representing the current user, and a lot of other user objects representing all the other users who are currently signed in and chatting, so you can display their names and avatars, too.

Let’s turn our user object into a

createUser() factory:

const createUser = ({ userName, avatar }) => ({

userName,

avatar,

setUserName( userName ){

this.userName = userName;

return this;

}

});

console.log( createUser({ userName : "echo", avatar : "echo.png" }));

/*

{

"avatar" : "echo.png",

"userName" : "echo",

"setUserName" : [Function setUserName]

}

*/

Arrow functions, =>, have an implicit return feature:

if the function body consists of a single expression, you can omit the

return keyword: () => "foo" is a function

that takes no parameters and returns the string "foo".

Be careful when you return object literals. By default,

JavaScript assumes you want to create a function body

when you use braces, e.g. { broken : true }. If you want to

use an implicit return for an object literal, you’ll need to

disambiguate by wrapping the object literal in parentheses:

const noop = () => { foo : "bar" };

console.log( noop()); // undefined

const createFoo = () => ({ foo : "bar" });

console.log( createFoo()); // { foo : "bar" }

In the first example, foo: is interpreted as a label,

and "bar" is interpreted as an expression that doesn’t get

assigned or returned. The function returns undefined.

In the createFoo() example, the parentheses force the

braces to be interpreted as an expression to be evaluated rather than a

function body block.

Pay special attention to the function signature:

const createUser = ({ userName, avatar }) => ({.

In this line, the braces ({, }) represent object

destructuring. This function takes one argument (an object) but

destructures two formal parameters from that single argument,

userName and avatar. Those parameters can then

be used as variables in the function body scope. You can also

destructure arrays:

const swap = ([ first, second ]) => [ second, first ];

console.log( swap([ 1, 2 ])); // [ 2, 1 ]

And you can use the rest and spread syntax (...varName)

to gather the rest of the values from the array (or a list

of arguments), and then spread those array elements back

into individual elements:

const rotate = ([ first, ...rest ]) => [ ...rest, first ];

console.log( rotate([ 1, 2, 3 ])); // [ 2, 3, 1 ]

Earlier we used square bracket computed property access notation to dynamically determine which object property to access:

const key = "avatar";

console.log( user[ key ]); // "echo.png"

We can also compute the value of keys to assign to:

const arrToObj = ([ key, value ]) => ({[ key ] : value });

console.log( arrToObj([ "foo", "bar" ])); // { foo : "bar" }

In this example, arrToObj takes an array

consisting of a key/value pair (aka a tuple) and converts it into an

object. Since we don’t know the name of the key, we need to compute the

property name in order to set the key/value pair on the object. For

that, we borrow the idea of square bracket notation from computed

property accessors, and reuse it in the context of building an object

literal: {[ key ] : value }.

After the interpolation is done, we end up with the final object:

{ "foo" : "bar" }.
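One place computed keys come in handy is turning a list of key/value pairs into a single object. The sketch below reuses arrToObj with a hypothetical pairs array. Merging the results with Object.assign() is just one possible approach:

```javascript
const arrToObj = ([ key, value ]) => ({[ key ] : value });

// Hypothetical list of key/value tuples.
const pairs = [
  [ "userName", "echo" ],
  [ "avatar", "echo.png" ]
];

// Convert each tuple to a one-property object, then merge them all.
const user = Object.assign({}, ...pairs.map( arrToObj ));

console.log( user ); // { userName : "echo", avatar : "echo.png" }
```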

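Functions in JavaScript support default parameter values, which have several benefits: callers can omit parameters that have suitable defaults, the defaults document the expected input, and tools can use them to infer the expected type. For example, a default value of

1 implies that the parameter can take a member of the

Number type.

Using default parameters we can document the expected interface for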

our createUser factory, and automatically fill in

Anonymous details if the user’s info is not supplied:

const createUser = ({

userName = "Anonymous",

avatar = "anon.png"

} = {}) => ({

userName,

avatar

});

console.log(

// { userName : "echo", avatar : "anon.png" }

createUser({ userName : "echo" }),

// { userName : "Anonymous", avatar : "anon.png" }

createUser()

);

The last = {} bit just before the parameter signature

closes means that if nothing gets passed in for this parameter, we’re

going to use an empty object as the default.

When you try to destructure values from the empty object, the default

values for properties will get used automatically, because that’s what

default values do: replace undefined with some predefined

value.

Without the = {} default value,

createUser() with no arguments would throw an error because

you can’t access properties of undefined.
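To see the difference, here is a sketch of the same factory without the = {} default. The name createUserStrict is hypothetical, used only to keep it distinct from createUser:

```javascript
// Same destructured parameters, but no `= {}` default for the argument.
const createUserStrict = ({
  userName = "Anonymous",
  avatar = "anon.png"
}) => ({
  userName,
  avatar
});

// Passing an empty object still works: the property defaults apply.
console.log( createUserStrict({}));
// { userName : "Anonymous", avatar : "anon.png" }

// Passing nothing fails, because undefined can't be destructured.
let errorType = "none";
try {
  createUserStrict();
} catch ( error ) {
  errorType = error instanceof TypeError ? "TypeError" : "other";
}
console.log( errorType ); // "TypeError"
```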

JavaScript does not have any native type annotations as of this writing, but several competing formats have sprung up over the years to fill the gaps, including JSDoc, Facebook’s Flow and Microsoft’s TypeScript. I use rtype for documentation — a notation I find much more readable than TypeScript for functional programming.

At the time of this writing, there is no clear winner for type annotations. None of the alternatives have been blessed by the JavaScript specification and there seem to be clear shortcomings in all of them.

Type inference is the process of inferring types based on the context in which they are used. In JavaScript, it is a very good alternative to type annotations.

If you provide enough clues for inference in your standard JavaScript function signatures, you’ll get most of the benefits of type annotations with none of the costs or risks.

Even if you decide to use a tool like TypeScript or Flow, you should do as much as you can with type inference, and save the type annotations for situations where type inference falls short. For example, there’s no native way in JavaScript to specify a shared interface. That’s both easy and useful with TypeScript or rtype.

Tern.js is a popular type inference tool for JavaScript that has plugins for many code editors and IDEs.

Microsoft’s Visual Studio Code doesn’t need Tern.js because it brings the type inference capabilities of TypeScript to regular JavaScript code.

When you specify default parameters for functions in JavaScript, tools capable of type inference such as Tern.js, TypeScript and Flow can provide IDE hints to help you use the API you’re working with correctly.
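As a sketch of what those clues look like in practice, the hypothetical createMessage factory below gives every parameter a default. The comments describe what an inference tool would likely conclude. The exact hints depend on the tool and editor you use:

```javascript
// Hypothetical factory: each default value hints at the expected type.
const createMessage = ({
  text = "",      // suggests a string
  priority = 1,   // suggests a number
  tags = []       // suggests an array
} = {}) => ({
  text,
  priority,
  tags
});

console.log( createMessage({ text : "hi" }));
// { text : "hi", priority : 1, tags : [] }
```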