A collection of tutorials and information regarding modern javascript.

Adrian Mejia

JavaScript has changed quite a bit in the last years. These are 12 new features that you can start using today!

The new additions to the language are called ECMAScript 6. It is also referred as ES6 or ES2015+.

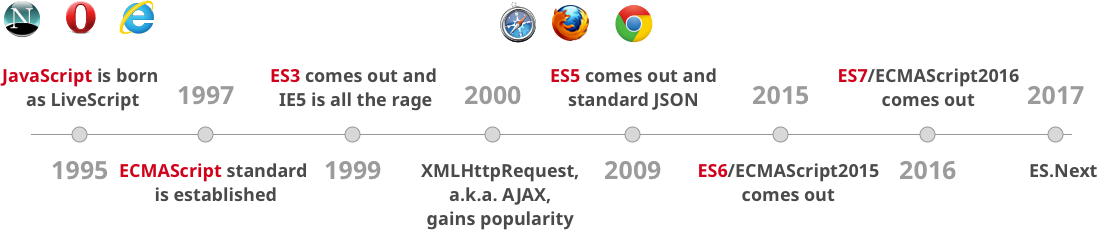

Since JavaScript conception on 1995, it has been evolving slowly. New additions happened every few years. ECMAScript came to be in 1997 to guide the path of JavaScript. It has been releasing versions such as ES3, ES5, ES6 and so on.

As you can see, there are gaps of 10 and 6 years between the ES3, ES5, and ES6. The new model is to make small incremental changes every year. Instead of doing massive changes at once like happened with ES6.

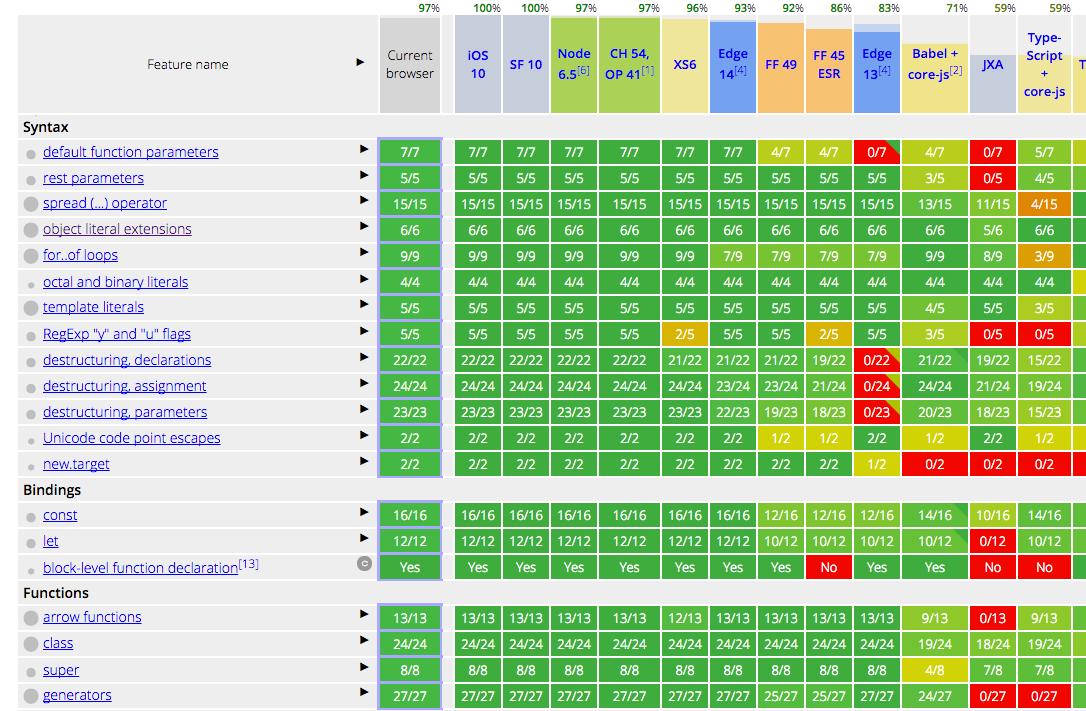

All modern browser and environments support ES6 already!

source: https://kangax.github.io/compat-table/es6/

Chrome, MS Edge, Firefox, Safari, Node and many others have already built-in support for most of the features of JavaScript ES6. So, everything that you are going to learn in this tutorial you can start using it right now.

Let’s get started with ECMAScript 6!

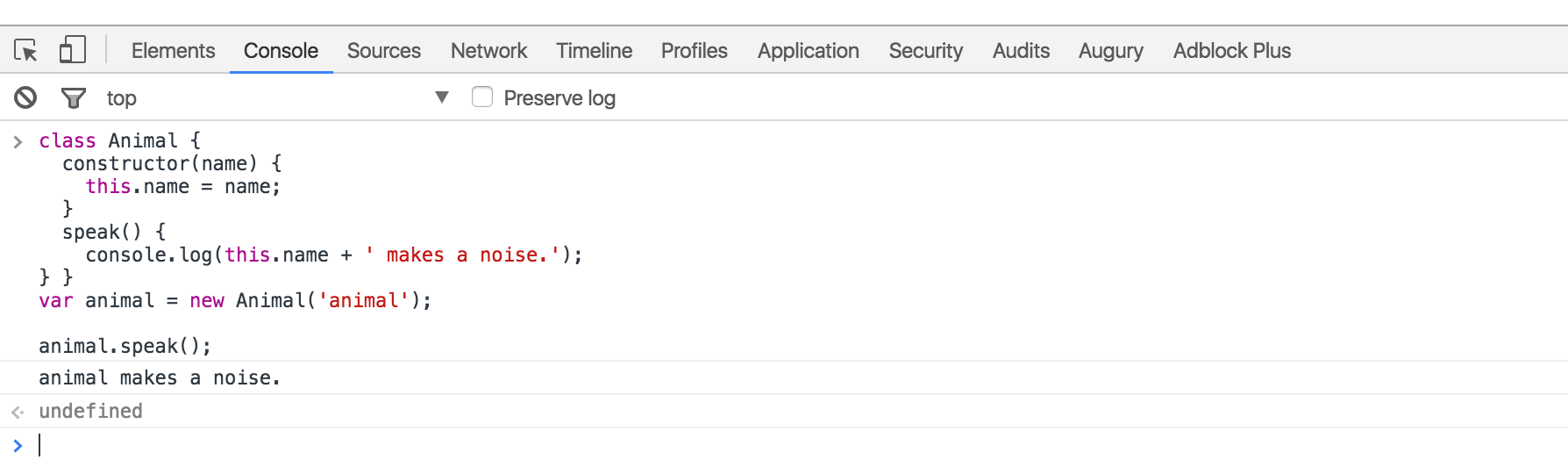

You can test all these code snippets on your browser console!

So don’t take my word and test every ES5 and ES6 example. Let’s dig in 💪

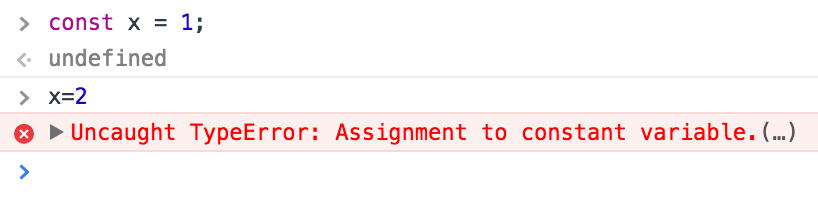

With ES6, we went from declaring variables with var to

use let/const.

What was wrong with var?

The issue with var is the variable leaks into other code

block such as for loops or if blocks.

ES5

var x = "outer";

function test(inner) {

if (inner) {

var x = "inner"; // scope whole function

return x;

}

return x; // gets redefined because line 4 declaration is hoisted

}

test(false); // undefined 😱

test(true); // innerFor test(false) you would expect to return

outer, BUT NO, you get undefined.

Why?

Because even though the if-block is not executed, the expression

var x in line 4 is hoisted.

var hoisting:

varis function scoped. It is availble in the whole function even before being declared.- Declarations are Hoisted. So you can use a variable before it has been declared.

- Initializations are NOT hoisted. If you are using

varALWAYS declare your variables at the top.- After applying the rules of hoisting we can understand better what’s happening:

ES5

var x = "outer";

function test(inner) {

var x; // HOISTED DECLARATION

if (inner) {

x = "inner"; // INITIALIZATION NOT HOISTED

return x;

}

return x;

}ECMAScript 2015 comes to the rescue:

ES6

let x = "outer";

function test(inner) {

if (inner) {

let x = "inner";

return x;

}

return x; // gets result from line 1 as expected

}

test(false); //

outertest(true); // innerChanging var for let makes things work as

expected. If the if block is not called the variable

x doesn’t get hoisted out of the block.

Let hoisting and “temporal dead zone”

- In ES6,

letwill hoist the variable to the top of the block (NOT at the top of function like ES5).- However, referencing the variable in the block before the variable declaration results in a

ReferenceError.letis blocked scoped. You cannot use it before it is declared.- “Temporal dead zone” is the zone from the start of the block until the variable is declared.

IIFE

Let’s show an example before explaining IIFE. Take a look here:

ES5

{ var private = 1; }

console.log(private); // 1As you can see, private leaks out. You need to use IIFE

(immediately-invoked function expression) to contain it:

ES5

(function(){

var private2 = 1;

})();

console.log(private2); // Uncaught ReferenceErrorIf you take a look at jQuery/lodash or other open source projects you

will notice they have IIFE to avoid polluting the global environment and

just defining on global such as _, $ or

jQuery.

On ES6 is much cleaner, We also don’t need to use IIFE anymore when

we can just use blocks and let:

ES6

{ let private3 = 1; }

console.log(private3); // Uncaught ReferenceErrorYou can also use const if you don’t want a variable to

change at all.

Bottom line: ditch

varforletandconst.

- Use

constfor all your references; avoid usingvar.- If you must reassign references, use

letinstead ofconst.

We don’t have to do more nesting concatenations when we have template literals. Take a look:

ES5

var first = "Adrian";

var last = "Mejia";

console.log("Your name is " + first + " " + last + ".");Now you can use backtick (`) and string interpolation

${}:

ES6

const first = "Adrian";

const last = "Mejia";

console.log(`Your name is ${first} ${last}.`);We don’t have to concatenate strings + \n anymore like

this:

ES5

var template = "<li *ngFor="let todo of todos" [ngClass]="{completed: todo.isDone}" >\n"

+" <div class="view">\n"

+" <input class="toggle" type="checkbox" [checked]="todo.isDone">\n"

+" <label></label>\n"

+" <button class="destroy"></button>\n"

+" </div>\n"

+" <input class="edit" value="">\n"

+"</li>";

console.log(template);On ES6 we can use the backtick again to solve this:

ES6

const template = `<li *ngFor="let todo of todos" [ngClass]="{completed: todo.isDone}">

<div class="view">

<input class="toggle" type="checkbox" [checked]="todo.isDone">

<label></label>

<button class="destroy"></button>

</div>

<input class="edit" value="">

</li>`;

console.log(template);Both pieces of code will have exactly the same result.

ES6 desctructing is very useful and consise. Follow this examples:

Getting elements from an arrays

ES5

var array = [1, 2, 3, 4];

var first = array[0];

var third = array[2];

console.log(first, third); // 1 3Same as:

ES6

const array = [1, 2, 3, 4];

const [first, ,third] = array;

console.log(first, third); // 1 3Swapping values

ES5

var a = 1;

var b = 2;

var tmp = a;

a = b;

b = tmp;

console.log(a, b); // 2 1same as

ES6

let a = 1;

let b = 2;

[a, b] = [b, a];

console.log(a, b); // 2 1ES5

function margin() {

var left = 1, right = 2, top = 3, bottom = 4;

return {

left: left,

right: right,

top: top,

bottom: bottom

};

}

var data = margin();

var left = data.left;

var bottom = data.bottom;

console.log(left, bottom); // 1 4In line 3, you could also return it in an array like this (and save some typing):

return [left, right, top, bottom];but then, the caller needs to think about the order of return data.

var left = data[0];

var bottom = data[3];With ES6, the caller selects only the data they need (line 6):

ES6

function margin() {

const left = 1, right = 2, top = 3, bottom = 4;

return {

left,

right,

top,

bottom

};

}

const { left, bottom } = margin();

console.log(left, bottom); // 1 4Notice: Line 3, we have some other ES6 features going on. We

can compact { left: left } to just { left }.

Look how much concise it is compare to the ES5 version. Isn’t that

cool?

ES5

var user = {firstName: "Adrian", lastName: "Mejia"};

function getFullName(user) {

var firstName = user.firstName;

var lastName = user.lastName;

return firstName + " " + lastName;

}

console.log(getFullName(user)); // Adrian MejiaSame as (but more concise):

ES6

const user = {firstName: "Adrian", lastName: "Mejia"};

function getFullName({ firstName, lastName }) {

return `${firstName} ${lastName}`;

}

console.log(getFullName(user)); // Adrian MejiaES5

function settings() {

return {

display: { color: "red" },

keyboard: { layout: "querty"}

};

}

var tmp = settings();

var displayColor = tmp.display.color;

var keyboardLayout = tmp.keyboard.layout;

console.log(displayColor, keyboardLayout); // red quertySame as (but more concise):

ES6

function settings() {

return {

display: { color: "red" },

keyboard: { layout: "querty"}

};

}

const {

display: { color: displayColor },

keyboard: { layout: keyboardLayout }

} = settings();

console.log(displayColor, keyboardLayout); // red quertyThis is also called object destructing.

As you can see, destructing is very useful and encourages good coding styles.

Best practices:

- Use array destructing to get elements out or swap variables. It saves you from creating temporary references.

- Don’t use array destructuring for multiple return values, instead use object destructuring

With ECMAScript 6, We went from “constructor functions” 🔨 to “classes” 🍸.

In JavaScript every single object has a prototype, which is another object. All JavaScript objects inherit their methods and properties from their prototype.

In ES5, we did Object Oriented programming (OOP) using constructor functions to create objects as follows:

ES5

var Animal = (function () {

function MyConstructor(name) {

this.name = name;

}

MyConstructor.prototype.speak = function speak() {

console.log(this.name + " makes a noise.");

};

return MyConstructor;

})();

var animal = new Animal("animal");

animal.speak(); // animal makes a noise.In ES6, we have some syntax sugar. We can do the same with less

boiler plate and new keywords such as class and

constructor. Also, notice how clearly we define methods

constructor.prototype.speak = function () vs

speak():

ES6

class Animal {

constructor(name) {

this.name = name;

}

speak() {

console.log(this.name + " makes a noise.");

}

}

const animal = new Animal("animal");

animal.speak(); // animal makes a noise.As we saw, both styles (ES5/6) produces the same results behind the scenes and are used in the same way.

Best practices:

- Always use

classsyntax and avoid manipulating theprototypedirectly. Why? because it makes the code more concise and easier to understand.- Avoid having an empty constructor. Classes have a default constructor if one is not specified.

Building on the previous Animal class. Let’s say we want

to extend it and define a Lion class

In ES5, It’s a little more involved with prototypal inheritance.

ES5

var Lion = (function () {

function MyConstructor(name){

Animal.call(this, name);

} // prototypal inheritance

MyConstructor.prototype = Object.create(Animal.prototype);

MyConstructor.prototype.constructor = Animal;

MyConstructor.prototype.speak = function speak() {

Animal.prototype.speak.call(this);

console.log(this.name + " roars 🦁");

};

return MyConstructor;

})();

var lion = new Lion("Simba");

lion.speak(); // Simba makes a noise.// Simba roars.I won’t go over all details but notice:

Animal constructor with the

parameters.Lion prototype to

Animal‘s prototype.speak method from the parent class

Animal.In ES6, we have a new keywords extends and

super .

ES6

class Lion extends Animal {

speak() {

super.speak();

console.log(this.name + " roars 🦁");

}

}

const lion = new Lion("Simba");

lion.speak(); // Simba makes a noise.// Simba roars.Looks how legible this ES6 code looks compared with ES5 and they do exactly the same. Win!

Best practices:

- Use the built-in way for inherintance with

extends.

We went from callback hell 👹 to promises 🙏

ES5

function printAfterTimeout(string, timeout, done){

setTimeout(function(){

done(string);

}, timeout);

}

printAfterTimeout("Hello ", 2e3, function(result){

console.log(result); // nested callback

printAfterTimeout(result + "Reader", 2e3, function(result){

console.log(result);

});

});We have one function that receives a callback to execute when is

done. We have to execute it twice one after another. That’s

why we called the 2nd time printAfterTimeout in the

callback.

This can get messy pretty quickly if you need a 3rd or 4th callback. Let’s see how we can do it with promises:

ES6

function printAfterTimeout(string, timeout){

return new Promise((resolve, reject) => {

setTimeout(function(){

resolve(string);

}, timeout);

});

}

printAfterTimeout("Hello ", 2e3).then((result) => {

console.log(result);

return printAfterTimeout(result + "Reader", 2e3);

}).then((result) => {

console.log(result);

});As you can see, with promises we can use then to do

something after another function is done. No more need to keep nesting

functions.

ES6 didn’t remove the function expressions but it added a new one called arrow functions.

In ES5, we have some issues with this:

ES5

var _this = this; // need to hold a reference

$(".btn").click(function(event){ _this.sendData(); // reference outer this

});

$(".input").on("change",function(event){

this.sendData(); // reference outer this

}.bind(this)); // bind to outer thisYou need to use a temporary this to reference inside a

function or use bind. In ES6, you can use the arrow

function!

ES6

// this will reference the outer one

$(".btn").click((event) => this.sendData());// implicit returns

const ids = [291, 288, 984];

const messages = ids.map(value => `ID is ${value}`);We went from for to forEach and then to

for...of:

ES5

// for

var array = ["a", "b", "c", "d"];

for (var i = 0; i < array.length; i++) {

var element = array[i];

console.log(element);

}// forEach

array.forEach(function (element) {

console.log(element);

});The ES6 for…of also allow us to do iterations.

ES6

// for ...of

const array = ["a", "b", "c", "d"];

for (const element of array) {

console.log(element);

}We went from checking if the variable was defined to assign a value

to default parameters. Have you done something like this

before?

ES5

function point(x, y, isFlag){

x = x || 0; y = y || -1;

isFlag = isFlag || true;

console.log(x,y, isFlag);

}

point(0, 0) // 0 -1 true 😱

point(0, 0, false) // 0 -1 true 😱😱

point(1) // 1 -1 truepoint() // 0 -1 trueProbably yes, it’s a common pattern to check is the variable has a value or assign a default. Yet, notice there are some issues:

0, 0 and get 0, -1false but get true.If you have a boolean as a default parameter or set the value to zero, it doesn’t work. Do you know why??? I’ll tell you after the ES6 example ;)

With ES6, Now you can do better with less code!

ES6

function point(x = 0, y = -1, isFlag = true){

console.log(x,y, isFlag);

}

point(0, 0) // 0 0 true

point(0, 0, false) // 0 0 false

point(1) // 1 -1 true

point() // 0 -1 trueNotice line 5 and 6 we get the expected results. The ES5 example

didn’t work. We have to check for undefined first since

false, null, undefined and

0 are falsy values. We can get away with numbers:

ES5

function point(x, y, isFlag){

x = x || 0;

y = typeof(y) === "undefined" ? -1 : y;

isFlag = typeof(isFlag) === "undefined" ? true : isFlag;

console.log(x,y, isFlag);

}

point(0, 0) // 0 0 true

point(0, 0, false) // 0 0 false

point(1) // 1 -1 true

point() // 0 -1 trueNow it works as expected when we check for

undefined.

We went from arguments to rest parameters and spread operator.

On ES5, it’s clumpsy to get an arbitrary number of arguments:

ES5

function printf(format) {

var params = [].slice.call(arguments, 1);

console.log("params: ", params);

console.log("format: ", format);

}

printf("%s %d %.2f", "adrian", 321, Math.PI);We can do the same using the rest operator ....

ES6

function printf(format, ...params) {

console.log("params: ", params);

console.log("format: ", format);

}

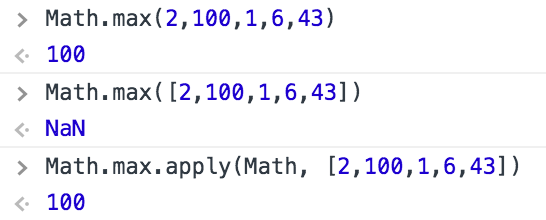

printf("%s %d %.2f", "adrian", 321, Math.PI);We went from apply() to the spread operator. Again we

have ... to the rescue:

Reminder: we use

apply()to convert an array into a list of arguments. For instance,Math.max()takes a list of parameters, but if we have an array we can useapplyto make it work.

As we saw in earlier, we can use apply to pass arrays as

list of arguments:

ES5

Math.max.apply(Math, [2,100,1,6,43]) // 100In ES6, you can use the spread operator:

ES6

Math.max(...[2,100,1,6,43]) // 100Also, we went from concat arrays to use spread

operator:

ES5

var array1 = [2,100,1,6,43];

var array2 = ["a", "b", "c", "d"];

var array3 = [false, true, null, undefined];

console.log(array1.concat(array2, array3));In ES6, you can flatten nested arrays using the spread operator:

ES6

const array1 = [2,100,1,6,43];

const array2 = ["a", "b", "c", "d"];

const array3 = [false, true, null, undefined];

console.log([...array1, ...array2, ...array3]);JavaScript has gone through a lot of changes. This article covers most of the core features that every JavaScript developer should know. Also, we cover some best practices to make your code more concise and easier to reason about.

If you think there are some other MUST KNOW feature let me know in the comments below and I will update this article.

Now you can set a variable key’s value within an object literal declaration.

// old way - myKey used after the object declaration

const myKey = "key3";

const obj = {

key1 : "One",

key2 : "Two"

};

obj[ myKey ] = "Three";

// new way

const obj2 = {

key1 : "One",

key2 : "Two",

[ myKey ] : "Three"

};A simple way to write anonymous functions and great for one liners and simple predicates.

const calculateTotal = total => total * 1.1;

calculateTotal( 10 ) // 11

// Cancel an event - another tiny task

const brickEvent = e => e.preventDefault();

document.querySelector( "div" ).addEventListener( "click", brickEvent );As you can see from the examples arrow functions utilise implicit returns and a massive reduction of syntax like curly braces and parenthesis.

These two functions are an expanded indexOf() which

allows you to specify methods to calculate the desired item

condition.

const ages = [ 12, 19, 6, 4 ];

const firstAdult = ages.find( age => age >= 18 ); // 19

const firstAdultIndex = ages.findIndex( age => age >= 18 ) // 1The spread operator signals that an array or iterable object should have its contents split into separate arguments within a call.

// Pass to function that expects separate multiple arguments

const numbers = [ 9, 4, 7, 1 ];

Math.min( ...numbers ); // 1

// Convert NodeList to Array

const divArray = [ ...document.querySelectorAll( "div" )];

// Convert Arguments to Array

const argsArray = [ ...arguments ];In the past developers have had to use workarounds to convert things like NodeLists into usable arrays with all the methods available.

Developers are used to generating strings with concatenation when

variables are part of the output. You are probably familiar with

something like

console.log( "This is the result : " + result );. Template

literals bring a new syntax to write such statements.

// Multiline String

const myString = `Hello

I'm a new line`; // no error

// Basic interpolation

const obj = { x : 1, y : 2 };

console.log( `You total is: ${ obj.x + obj.y }` ); // your total is: 3Developers used to other languages might be perplexed by the lack of default parameters in javascript. In other languages a function will not run unless all the arguments, with the correct type, is supplied when called but not javascript. Now with ES6 one can provide default arguments that will be used if not supplied when the function is called.

// Basic usage

function greet( name = "Anon" ){

console.log( `Hello ${ name }!` );

}

greet() // Hello Anon!

// You can have a function too!

function greet( name = "Anon", callback = function(){}){

console.log( `Hello ${ name }!` );

// No more "callback && callback()" (no conditional)

callback();

}And there we have it. Only small features but the type that you will use all the time and wonder why it’s taken this long for them to appear. Enjoy.

A Tutorial and discussion about three dots in ES6

Workarounds to utilise the arguments object within an internal function and mapping it to a proper array allowing array-style functions

function outerFunction() {

// store arguments into a separated variable

var argsOuter = arguments;

function innerFunction() {

// args is an array-like object

var even = Array.prototype.map.call(argsOuter, function(item) {

// do something with argsOuter

return item;

});

console.log('The arguments accessed in another function in an array structure', even);

}

innerFunction();

}

outerFunction([{

'test' : 'ming face',

'best' : 'ming taste'

}, 'a string of finest strung', 78, [1,2,3,4,5]

]);The current ES5 way to merge arrays without having to iterate over each item and push

var fruits = ['banana'];

var moreFruits = ['apple', 'orange'];

Array.prototype.push.apply(fruits, moreFruits);

console.log('A merged array using ES5-styled Array.prototype.slice.call', fruits);

// => ['banana', 'apple', 'orange']function countArguments(...args) {

return args.length;

}

console.log('How many arguments are we passing? : ', countArguments('welcome', 'to', 'Earth'));let cold = ['autumn', 'winter'];

let warm = ['spring', 'summer'];

// construct an array

console.log([...cold, ...warm]);

//destruct an array

let otherSeasons, autumn, ming;

// with deconstruction, the first two items in the cold array are set to the variables autumn and ming while the rest of the entries are passed to otherSeasons. Now, this final invocation yields an empty array as there are only two items in the cold array.

[autumn, ming, ...otherSeasons] = cold;

console.log(otherSeasons, autumn, ming);

// function arguments from an array

cold.push(...warm);

console.log(cold);

// Improved parameters access

// Rest parameter

function sumOnlyNumbers() {

var args = arguments;

var numbers = filterNumbers();

return numbers.reduce((sum, element) => sum + element);

function filterNumbers() {

return Array.prototype.filter.call(args, element => typeof element === 'number');

}

}This is a rewritten version using the three dots to set the arguments object to a parameter named args. Since there is no naming conflict with the arguments object of the inner function we can use args in both functions. Also worth noting is that args is an array so we can call filter directly without using Array.prototype.filter.call(uh, uh)

function sumOnlyNumbers(...args) {

let numbers = filterNumbers();

return numbers.reduce((sum, element) => sum + element);

function filterNumbers() {

return args.filter(element => typeof element === 'number');

}

}

console.log(sumOnlyNumbers(1, 'Hello', 5, false, {'obj' : 'Not a number'}, ['also', 'not', 'a', 'number'], 'Answer should be 6'));A final note about the ‘rest’ parameter. It’s unlikely that all of your functions take no additional named parameters so ensure that the ‘rest’ parameter comes last of all when declaring your function.

In this example, the first parameter is assigned to ‘type’ and the rest are part of the ‘items’ array. We then return an array of items that match the type. The items array does not contain the first param : that is assigned to type only.

function filter(type, ...items) {

return items.filter(item => typeof item === type);

}

console.log(filter('boolean', true, 0, false));

console.log(filter('number', 9, false, 'Fifty', '90', 60, 459));(function() {

let outerArguments = arguments;

const concat = (...items) => {

console.log(arguments === outerArguments) // => true

return items.reduce((result, item) => result + item, '');

};

console.log(concat(1, 5, 'nine')); // => '15nine'

})();An example of the .apply() method of ES5

let tcountries = ['Moldova', 'Ukraine'];

tcountries.push.apply(tcountries, ['USA', 'Japan']);

console.log(tcountries);

// => mol, ukr, usa, japónYou can see the redundancy of supplying the array as part of the apply() method. Using the ‘spread’ operator we could do it like this :

let countries = ['Moldova', 'Ukraine'];

countries.push(...['USA', 'Japan']);

countries.push('tUSA', 'tJapan');

console.log(countries);This example is a little trite as witnessed above. One can simply push multiple items outside of the array structure. I guess this becomes more useful when one has many items within an existing array.

class King {

constructor(name, country) {

this.name = name;

this.country = country;

}

getDescription() {

return `${this.name} leads ${this.country}`;

}

}You cannot do this with apply : spread operator configures the constructor invocation arguments from an array

var details = ['Alexander the Great', 'Greece'];

var Alexander = new King(...details);

console.log(Alexander.getDescription());

// You can mix spread operators and regular arguments together, observe

var numbers = [1, 2];

var evenNumbers = [4, 8];

const zero = 0;

numbers.splice(0, 2, ...evenNumbers, zero);

console.log(numbers); // => 4, 8, 0// create array with initial elements from another array :

var initial = [0, 1];

var numbers1 = [...initial, 5, 7];

let numbers2 = [4, 8, ...initial];

console.log(numbers2);

// concatenate 2 or more arrays :

var odds = [1, 5, 7];

var evens = [4, 6, 8];

var all = [...odds, ...evens];

console.log(all); // concatenates odds and evens into all

// Clone an array instance :

var words = ['Hi', 'Hello', 'Good day'];

var otherWords = [...words];

console.log(otherWords); // words array

console.log(otherWords === words) // false

// So it clones the array but not 'on the contained elements' ; it's not a deep clone (?)Destructing assignments are powerful expressions to extract data from arrays and objects

var seasons = ['invierno', 'primavera', 'verano', 'otoño'];

var coldSeason, otherSeason;

[coldSeason, ...otherSeason] = seasons;

console.log('Cold : ', coldSeason, 'Others : ', otherSeason);The spread operator can extract data from any object or primitive that is iterable and adheres to the iterable protocol…

var str = 'hi';

var iterator = str[Symbol.iterator]();

console.log(iterator.toString()) // => '[object String Iterator]'

console.log(iterator.next());

console.log(iterator.next());

console.log(iterator.next());

console.log([...str]);

// you can see that the string variable has the iteration protocol that calls next() until done(). This allows us to store the characters of the string in an array using the spread operator similar to [].explode();

function ito() {

var index = 0;

return {

next : () => ({ // Conform to Iterator Protocol

done : index >= this.length,

value : this[index++]

})

};

}

var arrayLike = {

0 : 'Cat',

1 : 'Bird',

length : 2

};

arrayLike[Symbol.iterator] = ito;

var array = [...arrayLike];

console.log(array); // => boom? BOOM! Note, the tutorial suggested the function above be called iterator but this failed me. This only worked by changing the function name to ito, eh.ES 2017 introduces two new Object methods. Let’s explore. In javascript an object is a collection of related data that is stored in key/value pairs. We will work with this object in this guide:

const population = {

tokyo : 37833000,

delhi : 24953000,

shangahi : 22991000

};The entries on the left (city names) are the keys whilst the right entries (populations) are the values.

This is not a new method in ES 2017 but quite handy.

Object.keys() iterates over all the keys in the object. It

works like so:

Object.keys( population );

// [ 'tokyo', 'delhi', 'shangai' ];The very same as Object.keys but, claro, it will return

the values rather than the keys. Once more:

Object.values( population );

// [ 37833000, 24953000, 22991000 ]Now heres a thing. Why not return both bits of data in the object?

This should prove useful when iterating over an object as one can use

the lovely Array methods that make such traversals easy and

easier to read. The resulting output will be an array of arrays:

Object.entries( population );

// [[ 'tokyo', 37833000 ], [ 'delhi', 2453000 ], [ 'shanghai', 22991000 ]]And that’s a wrap! Very easy but could prove very useful. Go forth and use these methods when you see fit.

Before we begin we must understand what an iterator is. Let’s summarise the key points:

next() method

which returns the next item in the sequence.This method returns a new Array Iterator object

containing the keys for each item in the array. It can

be advanced with next():

let arr = [ 'a', 'b', 'c' ];

let iterator = arr.keys();

iterator.next(); // { value : 0, done : false }

iterator.next(); // { value : 1, done : false }

iterator.next(); // { value : 2, done : false }

iterator.next(); // { value : undefined, done : true }This method works similarly as Array.keys but bare in

mind it isn’t well supported in browsers as yet:

let arr = [ 'a', 'b', 'c' ];

let iterator = arr.values();

iterator.next().value(); // a

iterator.next().value(); // b

iterator.next().value(); // c

iterator.next().value(); // undefinedAs you may have guessed this method returns an object with both the

key and the value that can be iterated

using the next() method:

let arr = [ 'a', 'b', 'c' ];

let iterator = arr.entries();

iterator.next().value(); // [ 0, 'a' ]

iterator.next().value(); // [ 1, 'b' ]

iterator.next().value(); // [ 2, 'c' ]

iterator.next().value(); // undefinedFin. Wait a minute. This doesn’t seem particularly useful compared to

the Object.entries method but I’m sure one can find uses to

programatically iterate through an array with access to the

keys and values. I can’t think of one

off the top of my head but I’m sure they exist.

A brief lesson on two ES6 Array methods;

Array.find and Array.findIndex.

The Array.find() method returns the first element in an

array that passes a given test. The rules that govern this are as

follows :

find() method executes a callback function once for

each element in the array until it finds a value that matches the

predicate.find() does not mutate or change the original

array.A basic example to get us up and running:

const arr = [ "a", "b", "c" ];

arr.find( k => k == "b" );

// "b"We pass an anonymous function to find() with the

predicate item equals character “b”. The function then iterates over the

array checking if each entry is equal to “b”. In this case the second

entry matches the condition and the value is returned.

For our next example we will attempt to extract an odd number from an array of all-but-one even numbered array item values.

const arr = [ 2, 4, 6, 8, 9, 10, 12, 14 ];

function isOdd( i ){

return i % 2 != 0;

}

arr.find( isOdd );

// 9All pretty straightforward I trust. In this case we pass in a named

function to find() that checks for an odd number. This

function accepts one parameter which will be the array item.

This method is exactly the same as the find() method in

deed and intention but will of course return the key rather than the

value. Should the method prove false then -1 is returned to

denote nothing was found; similar to indexOf().

Using the same example as above, searching for an item with the value

“b”, we pass the same anonymous function to findIndex() and

get the index in return:

const arr = [ "a", "b", "c" ];

arr.findIndex( k => k == "b" );

// 1 Let’s use the same example again for example 2, what will the answer be?

const arr = [ 2, 4, 6, 8, 9, 10, 12, 14 ];

function isOdd( i ){

return i % 2 != 0;

}

arr.findIndex( isOdd );

// 4And that’s it for Array.find() and

Array.findIndex().

ES 2017 introduced asynchronous functions. Async functions are

essentially a cleaner way to work with asynchronous code in

javascript. In order to understand exactly what these

are, and how they work, we first need to understand Promises.

Promises and

callbacks).Promises.Promise. If the function

throws an error, the Promise will be rejected. If the

function returns a value the Promise will be resolved.Writing an async function is quite simple. You just need to add the

async keyword prior to function:

// Normal function

function add( x, y ){

return x + y;

}

// Async function

async function add( x ,y ){

return x + y;

}Async functions can make use of the await expression.

This will pause the async function and wait for the

Promise to resolve prior to moving on.

You have the gist of it so let’s try it out. First we’re going to

create some code using Promises. Once we’ve got something

working we’ll rewrite our function using async/await so you can see just

how much simpler it is…

function doubleAfter2Seconds( x ){

return new Promise( resolve => {

setTimeout(() => {

resolve( x * 2 );

}, 2000 );

});

}In this code we have a function called

doubleAfter2Seconds. This function will take a number as

input and will resolve two seconds later with the number doubled.

We can invoke our function and pass in in the number 10

to try it out. To do this, we’ll call our function while passing in

10. Then, after the Promise has resolved,

we’ll take our returned value and log it to the console. Here’s what

this would look like:

doubleAfter2Seconds( 10 ).then( r => { console.log( r ); });

Super! But what if we want to run a few different values through our function and add the result? Unfortunately we can’t simply add our invocations together and log them:

let sum = doubleAfter2Seconds( 10 ) +

doubleAfter2Seconds( 20 ) +

doubleAfter2Seconds( 30 );

console.log( sum ); // undefinedThe problem with the above code is it doesn’t actually wait for our

Promises to resolve before logging to the console. One

possible solution if to set up a promise chain. To do this we’ll create

a new function called addPromise. Our function will take an

input value and will return a Promise. Here’s what the

boilerplate code looks like:

function addPromise( x ){

return new Promise( resolve => {

// Code goes here…

// resolve()

});

}Great. Now we can add in our calls to our

doubleAfter2Seconds function Once we’re done, we can

resolve with our new sum. In this example we should be returning

x + 2*a + 2*b + 2*c.

function addPromise( x ){

return new Promise( resolve => {

doubleAfter2Seconds( 10 ).then( a => {

doubleAfter2Seconds( 20 ).then( b => {

doubleAfter2Seconds( 30 ).then( c => {

resolve( x + a + b + c );

})

})

})

});

}Yikes! Isn’t that the type of horrible, nested crap modern javascript was supposed to move away from? And what is actually going on here?

addPromise. This function

accepts one parameter.new Promise that we’ll be

returning. Note that for the sake of simplicity we’re not handling

rejections/errors.doubleAfter2Seconds. The first

call takes 10 as its parameter and two seconds later the

value of 20 is returned to the next function

(.then) in the form of the parameter a.20, 30 returning 40, 60 respectively.doubleAfter2Seconds we then

resolve the Promise and return the sum of all the numbers

processed (130).Promises to Async/AwaitEven that small example was horrible to write wasn’t it? Remove the

addPromise function and create a new function called

addAsync. This function will have the exact same purpose as

our addPromise did.

async function addAsync( x ){

const a = await doubleAfter2Seconds( 10 );

const b = await doubleAfter2Seconds( 20 );

const c = await doubleAfter2Seconds( 30 );

return x + a + b + c;

}Huh. That’s the summation of the refactor. I must say I’m disappointed. It would seem to be a bizarrely specific function that doesn’t have much use. Let’s try and rewrite it to be a bit more useful.

I tried rewriting it using Array methods but it turns

out one can’t use async/await in this fashion. For example, using

.map() would allow one to pass any function to the

parent function and use it as the .map() parameter prefixed

with await. This seems logical to me but alas all that is

mapped are the individual Promises. They do resolve but one

can’t grab the results in order to then .reduce() the

values to a total. So maybe you have to pass an async function that then

calls the actual function you want to run (although I did that with an

async arrow function). This all begins to sound like unnecessary

complexity for something very simple. Actually, you know, thinking about

it none of this makes sense. Using .map() of course expects

synchronous behaviour as you are moving sequentially through an

array. Bleh.

Sometimes modern Javascript projects get out of hand. A major culprit in this can be the messy handling of asynchronous tasks, leading to long, complex, and deeply nested blocks of code. Javascript now provides a new syntax for handling these operations, and it can turn even the most convoluted asynchronous operations into concise and highly readable code.

First a brief bit of history. In the late 1990s, Ajax was the first major breakthrough in asynchronous Javascript. This technique allowed websites to pull and display new data after the HTML had been loaded, a revolutionary idea at a time when most websites would download the entire page again to display a content update. The technique (popularized in name by the bundled helper function in jQuery) dominated web-development for all of the 2000s, and Ajax is the primary technique that websites use to retrieve data today, but with XML largely substituted for JSON.

When NodeJS was first released in 2009, a major focus of the server-side environment was allowing programs to gracefully handle concurrency. Most server-side languages at the time handled I/O operations by blocking the code completion until the operation had finished. Nodejs instead utilized an event-loop architecture, such that developers could assign “callback” functions to be triggered once non-blocking asynchronous operations had completed, in a similar manner to how the Ajax syntax worked.

A few years later, a new standard called “Promises” emerged in both NodeJS and browser environments, offering a powerful and standardized way to compose asynchronous operations. Promises still used a callback based format, but offered a consistent syntax for chaining and composing asynchronous operations. Promises, which had been pioneered by popular open-source libraries, were finally added as a native feature to Javascript in 2015.

Promises were a major improvement, but they still can often be the cause of somewhat verbose and difficult-to-read blocks of code.

Now there is a solution.

Async/await is a new syntax (borrowed from .NET and C#) that allows us to compose Promises as though they were just normal synchronous functions without callbacks. It’s a fantastic addition to the Javascript language, added last year in Javascript ES7, and can be used to simplify pretty much any existing JS application.

We’ll be going through a few code examples.

No libraries are required to run these examples. Async/await is fully supported in the latest versions of Chrome, Firefox, Safari, and Edge, so you can try out the examples in your browser console. Additionally, async/await syntax works in Nodejs version 7.6 and higher, and is supported by the Babel and Typescript transpilers, so it can really be used in any Javascript project today.

If you want to follow along on your machine, we’ll be using this dummy API class. The class simulates network calls by returning promises which will resolve with simple data 200ms after being called.

class Api {

constructor () {

this.user = { id: 1, name: 'test' }

this.friends = [ this.user, this.user, this.user ]

this.photo = 'not a real photo'

}

getUser () {

return new Promise((resolve, reject) => {

setTimeout(() => resolve(this.user), 200)

})

}

getFriends (userId) {

return new Promise((resolve, reject) => {

setTimeout(() => resolve(this.friends.slice()), 200)

})

}

getPhoto (userId) {

return new Promise((resolve, reject) => {

setTimeout(() => resolve(this.photo), 200)

})

}

throwError () {

return new Promise((resolve, reject) => {

setTimeout(() => reject(new Error('Intentional Error')), 200)

})

}

}Each example will be performing the same three operations in sequence: retrieve a user, retrieve their friends, retrieve their picture. At the end, we will log all three results to the console.

Here is an implemention using nested promise callback functions.

function callbackHell () {

const api = new Api()

let user, friends

api.getUser().then(function (returnedUser) {

user = returnedUser

api.getFriends(user.id).then(function (returnedFriends) {

friends = returnedFriends

api.getPhoto(user.id).then(function (photo) {

console.log('callbackHell', { user, friends, photo })

})

})

})

}This probably looks familiar to anyone who has worked on a Javascript project. The code block, which has a reasonably simple purpose, is long, deeply nested, and ends in this…

})

})

})

}In a real codebase, each callback function might be quite long, which can result in huge and deeply indented functions. Dealing with this type of code, working with callbacks within callbacks within callbacks, is what is commonly referred to as “callback hell”.

Even worse, there’s no error checking, so any of the callbacks could fail silently as an unhandled promise rejection.

Let’s see if we can do any better.

function promiseChain () {

const api = new Api()

let user, friends

api.getUser()

.then((returnedUser) => {

user = returnedUser

return api.getFriends(user.id)

})

.then((returnedFriends) => {

friends = returnedFriends

return api.getPhoto(user.id)

})

.then((photo) => {

console.log('promiseChain', { user, friends, photo })

})

}One nice feature of promises is that they can be chained by returning another promise inside each callback. This way we can keep all of the callbacks on the same indentation level. We’re also using arrow functions to abbreviate the callback function declarations.

This variant is certainly easier to read than the previous, and has a better sense of sequentiality, but is still very verbose and a bit complex looking.

What if it were possible to write it without any callback functions? Impossible? How about writing it in 7 lines?

async function asyncAwaitIsYourNewBestFriend () {

const api = new Api()

const user = await api.getUser()

const friends = await api.getFriends(user.id)

const photo = await api.getPhoto(user.id)

console.log('asyncAwaitIsYourNewBestFriend', { user, friends, photo })

}Much better. Calling “await” in front of a promise pauses the flow of the function until the promise has resolved, and assigns the result to the variable to the left of the equal sign. This way we can program an asynchronous operation flow as though it were a normal synchronous series of commands.

I hope you’re as excited as I am at this point.

Note that “async” is declared at the beginning of the function declaration. This is required and actually turns the entire function into a promise. We’ll dig into that later on.

Async/await makes lots of previously complex operations really easy. For example, what if we wanted to sequentially retrieve the friends lists for each of the user’s friends?

Here’s how fetching each friend list sequentially might look with normal promises.

function promiseLoops () {

const api = new Api()

api.getUser()

.then((user) => {

return api.getFriends(user.id)

})

.then((returnedFriends) => {

const getFriendsOfFriends = (friends) => {

if (friends.length > 0) {

let friend = friends.pop()

return api.getFriends(friend.id)

.then((moreFriends) => {

console.log('promiseLoops', moreFriends)

return getFriendsOfFriends(friends)

})

}

}

return getFriendsOfFriends(returnedFriends)

})

}We’re creating an inner-function that recursively chains promises for the fetching friends-of-friends until the list is empty. Ugh. It’s completely functional, which is nice, but this is still an exceptionally complicated solution for a fairly straightforward task.

Note - Attempting to simplify the

promiseLoops()function usingPromise.all()will result in a function that behaves in significantly different manner. The intention of this example is to run the operations sequentially (one at a time), whereasPromise.all()is used for running asynchronous operations concurrently (all at once).Promise.all()is still very powerful when combined with async/await, however, as we’ll see in the next section.

This could be so much easier.

async function asyncAwaitLoops () {

const api = new Api()

const user = await api.getUser()

const friends = await api.getFriends(user.id)

for (let friend of friends) {

let moreFriends = await api.getFriends(friend.id)

console.log('asyncAwaitLoops', moreFriends)

}

}No need to write any recursive promise closures. Just a for-loop. Async/await is your friend.

It’s a bit slow to get each additional friend list one-by-one, why not do them in parallel? Can we do that with async/await?

Yeah, of course we can. It solves all of our problems.

async function asyncAwaitLoopsParallel () {

const api = new Api()

const user = await api.getUser()

const friends = await api.getFriends(user.id)

const friendPromises = friends.map(friend => api.getFriends(friend.id))

const moreFriends = await Promise.all(friendPromises)

console.log('asyncAwaitLoopsParallel', moreFriends)

}To run operations in parallel, form an array of promises to be run,

and pass it as the parameter to Promise.all(). This returns

a single promise for us to await, which will resolve once all of the

operations have completed.

There is, however, one major issue in asynchronous programming that we haven’t addressed yet: error handling. The bane of many codebases, asynchronous error handling often involves writing individual error handling callbacks for each operation. Percolating errors to the top of the call stack can be complicated, and normally requires explicitly checking if an error was thrown at the beginning of every callback. This approach is tedious, verbose and error-prone. Furthermore, any exception thrown in a promise will fail silently if not properly caught, leading to “invisible errors” in codebases with incomplete error checking.

Let’s go back through the examples and add error handling to each. To test the error handling, we’ll be calling an additional function, “api.throwError()”, before retrieving the user photo.

Let’s look at a worst-case scenario.

function callbackErrorHell () {

const api = new Api()

let user, friends

api.getUser().then(function (returnedUser) {

user = returnedUser

api.getFriends(user.id).then(function (returnedFriends) {

friends = returnedFriends

api.throwError().then(function () {

console.log('Error was not thrown')

api.getPhoto(user.id).then(function (photo) {

console.log('callbackErrorHell', { user, friends, photo })

}, function (err) {

console.error(err)

})

}, function (err) {

console.error(err)

})

}, function (err) {

console.error(err)

})

}, function (err) {

console.error(err)

})

}This is just awful. Besides being really long and ugly, the control flow is very unintuitive to follow since it flows from the outside in, instead of from top to bottom like normal, readable code. Awful. Let’s move on.

We can improve things a bit by using a combined Promise “catch” method.

function callbackErrorPromiseChain () {

const api = new Api()

let user, friends

api.getUser()

.then((returnedUser) => {

user = returnedUser

return api.getFriends(user.id)

})

.then((returnedFriends) => {

friends = returnedFriends

return api.throwError()

})

.then(() => {

console.log('Error was not thrown')

return api.getPhoto(user.id)

})

.then((photo) => {

console.log('callbackErrorPromiseChain', { user, friends, photo })

})

.catch((err) => {

console.error(err)

})

}This is certainly better; by leveraging a single catch function at the end of the promise chain, we can provide a single error handler for all of the operations. However, it’s still a bit complex, and we are still forced to handle the asynchronous errors using a special callback instead of handling them the same way we would normal Javascript errors.

We can do better.

async function aysncAwaitTryCatch () {

try {

const api = new Api()

const user = await api.getUser()

const friends = await api.getFriends(user.id)

await api.throwError()

console.log('Error was not thrown')

const photo = await api.getPhoto(user.id)

console.log('async/await', { user, friends, photo })

} catch (err) {

console.error(err)

}

}Here, we’ve wrapped the entire operation within a normal try/catch block. This way, we can throw and catch errors from synchronous code and asynchronous code in the exact same way. Much simpler.

I mentioned earlier that any function tagged with “async” actually returns a promise. This allows us to really easily compose asynchronous control flows.

For instance, we can reconfigure the earlier example to return the user data instead of logging it. Then we can retrieve the data by calling the async function as a promise.

async function getUserInfo () {

const api = new Api()

const user = await api.getUser()

const friends = await api.getFriends(user.id)

const photo = await api.getPhoto(user.id)

return { user, friends, photo }

}

function promiseUserInfo () {

getUserInfo().then(({ user, friends, photo }) => {

console.log('promiseUserInfo', { user, friends, photo })

})

}Even better, we can use async/await syntax in the receiver function too, leading to a completely obvious, even trivial, block of asynchronous programing.

async function awaitUserInfo () {

const { user, friends, photo } = await getUserInfo()

console.log('awaitUserInfo', { user, friends, photo })

}What if now we need to retrieve all of the data for the first 10 users?

async function getLotsOfUserData () {

const users = []

while (users.length < 10) {

users.push(await getUserInfo())

}

console.log('getLotsOfUserData', users)

}How about in parallel? And with airtight error handling?

async function getLotsOfUserDataFaster () {

try {

const userPromises = Array(10).fill(getUserInfo())

const users = await Promise.all(userPromises)

console.log('getLotsOfUserDataFaster', users)

} catch (err) {

console.error(err)

}

}With the rise of single-page javascript web apps and the widening adoption of NodeJS, handling concurrency gracefully is more important than ever for Javascript developers. Async/await alleviates many of the bug-inducing control-flow issues that have plagued Javascript codebases for decades and is pretty much guaranteed to make any async code block significantly shorter, simpler, and more self-evident. With near-universal support in mainstream browsers and NodeJS, this is the perfect time to integrate these techniques into your own coding practices and projects.

Krasimir Tsonev

Sometimes when you learn something new you get really excited. Excited to that level so you want to teach it to someone. That is the case with the concept which I found a couple of months ago. It is an implementation of the command pattern using generators. Or the well known saga used in the redux-saga library. In this article we will see how the idea makes our asynchronous code simpler and easy to read. We will also implement it ourself using generators.

If you are lazy and don’t want to ready everything check the banica repo. It is all the stuff that we say here but wrapped in a library.

A generator is an object that conforms iterable

and iterator

protocols. Which means that it is an object that has a

Symbol.iterator key responding to a function returning an

iterator. And the iterator defines a standard way to produce values.

Every string in JavaScript for example has this characteristics. For

example:

const str = 'hello';const iterator = str[Symbol.iterator]();

iterator.next(); // {value: "h", done: false}

iterator.next(); // {value: "e", done: false}

iterator.next(); // {value: "l", done: false}

iterator.next(); // {value: "l", done: false}

iterator.next(); // {value: "o", done: false}

iterator.next(); // {value: undefined, done: true}By calling the iterator’s next method we are asking for

the next value in a sequence of values. It is the same with generators.

Once we initialize a generator we may fetch values from it.

To define a generator we use a special type of function syntax

containing an asterisk before the name of the function and

after the function keyword.

function* createGenerator() { /* ... */ }

const generator = createGenerator();generator.next();

// {value: undefined, done: true}Once we call that function we receive a generator object with an

iterator-ish API. We also have a return method that

completes the generator with a given value and throw which

resumes the generator and throws an error inside.

The most exciting part in the generators is done via the

yield keyword. The yield expression allows us

to pause the function and gives control to the code that runs the

generator. Later when we call next we resume the generator

with an optional input. Here is an example:

function* formatAnswer() {

const answer = yield 'foo';

return `The answer is ${ answer }`;

}

const generator = formatAnswer();

console.log(generator.next()); // {value: "foo", done: false}

console.log(generator.next(42)); // {value: "The answer is 42", done: true}The first next pauses the function just before assigning

a value to the answer constant. We receive foo

as a value and done is false

because the generator is not finished yet. The followed

next call resumes the function with 42 which

gets assigned to answer. And because we have a

return statement the generator is completed and we have

done set to true.

This type of communication between the generator and the code that iterates it is what we will be using for implementing the command pattern. That is what excited me the most because we are able to handle asynchronous processes by writing code that looks synchronous.

The big deal using the command pattern is to split the code that wants to do something from the code that is actually doing it. Let’s take the following example:

const player = function (name) {

return {

moveLeft() {

console.log(`${ name } moved to the left`);

},

moveRight() {

console.log(`${ name } moved to the right`);

},

jump() {

console.log(`${ name } jumped`);

}

}

}

const p = player('Foo');

p.moveLeft(); // Foo moved to the leftp.jump(); // Foo jumpedWe see how the code that wants to make the player jumping is actually

doing it (p.jump() call). That is fine but we may use

another implementation:

const player = function (name) {

const commands = {

moveLeft() {

console.log(`${ name } moved to the left`);

},

moveRight() {

console.log(`${ name } moved to the right`);

},

jump() {

console.log(`${ name } jumped`);

}

}

return {

execute(command) {

commands[command.action]();

}

}

}

const p = player('Foo');

p.execute({ action: 'moveLeft' }); // Foo moved to the left

p.execute({ action: 'jump' }); // Foo jumpedWe see how that new implementation introduces one more level of

abstraction. Now the code that wants to make the player move/jump is not

actually doing it. This helps a lot if we have to change the API of the

player. Like for example if we want to rename

moveLeft to moveBackward and

moveRight to moveForward. We don’t have to

amend all the places which are using these methods but only create an

alias in the execute function. Having such separation also

helps us inject logic before the actual method invocation. And if that

method is an asynchronous operation we may simply handle it at this

level.

Let’s keep the idea of having a player that we need to

move and jump. We also want to provide command objects like

{ action: 'jump' } and someone else handle the actual

work.

function iterateOverTheGenerator(gen, name) {

const status = gen.next();

if (status.done) return;

switch (status.value.action) {

case 'moveLeft': console.log(`${ name } moved to the left`);

break;;

case 'moveRight': console.log(`${ name } moved to the right`);

break;

case 'jump': console.log(`${ name } jumped`);

break;

}

return iterateOverTheGenerator(gen, name);

}

function* createGenerator() {

yield { action: 'moveLeft' };

yield { action: 'jump' };

}

const generator = createGenerator();/*It prints: Foo moved to the left Foo jumped*/

iterateOverTheGenerator(generator, 'Foo');Very often when working with a generator we have a helper that loops

over the produced values. Remember how the generator object is actually

an iterator. What happens when calling next is that the

function pauses at the first yield expression and the

value in the

{ done: <boolean>, value: <something> } object

is what is yielded. In our example this is the command

object. We see what’s the desired action and call again

iterateOverTheGenerator so we could fetch another

instruction. The process continues till we reach the end of the

generator (done is true).

Of course iterateOverTheGenerator is really specific and

it knows a lot about what kind of commands we want to execute. The goal

in this article is to produce a more robust utility that accepts a

generator, iterates over its values and execute functions.

More or less the commands that we want to handle outside of the generator are:

That is pretty much all the different types of function calls that I see in my daily JavaScript work. Let’s start with the simplest one - handle synchronous function call outside of the generator.

First we need a function for creating the command object. We don’t

want to write { action: <something> } all the time so

it will be nice if we have a helper for that.

function call(func, ...args) {

return { type: 'call', func, args };

}

call(mySynchronousFunction, 'foo', 'bar');// { type: 'call', func: <mySynchronousFunction>, args: ['foo', 'bar' ] }For the purpose of this article we may skip the type key

because all we are going to do is calling functions but it is a good

idea to make that process explicit. Later we may decide to extend this

layer and add something different like fetching data from a store or

dispatching an action (if we work in Flux-ish context).

Let’s use the same player concept and say that our main

object has just two methods - moveLeft and

moveRight. They will update an internal variable

position by given steps. We also have a

getPosition which simply returns the value of the

position variable.

const player = function () {

var position = 0;

return {

moveLeft(steps) {

position -= steps;

},

moveRight(steps) {

position += steps;

},

getPosition() {

return position;

}

}

}Now it gets interesting. We have to write a generator function that

uses the call helper to execute the methods of the

player.

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

const position = yield call(player.getPosition);

console.log(`The position is ${ position }.`);

}We basically say “Move the player two steps to the left and one

step to the right. Then give me the player’s position”. The

game generator itself is doing nothing. That is because we

yield only JavaScript objects. Instructions of what we want

to happen but without doing it. We could easily write the following

equivalent:

function* game(player) {

yield { type: 'call', func: player.moveLeft, args: [2] };

yield { type: 'call', func: player.moveRight, args: [1] };

const position = yield { type: 'call', func: player.getPosition, args: [] };

console.log(`The position is ${ position }.`);

}The next step in our implementation is to build the receiver. The bit which iterates the generator and executes our commands.

function receiver(generator) {

const iterate = function ({ value, done }) {

if (done) return value;

if (value.type === 'call') {

const result = value.func(...value.args);

return iterate(generator.next(result));

}

}

return iterate(generator.next());

}

receiver(game(player()));/*The result in the console is "The position is -1".*/The first thing that we do in the receive is to call

generator.next and pass the result to our internal

iterate function. It will be responsible for recursively

calling next till we complete the generator. It also makes

sure that we resume the generator with the result of the last executed

command. There are four calls of iterate:

done is false and value

contains a moveLeft commanddone is false and value

contains a moveRight commanddone is false and value

contains a getPosition commanddone is true and value is

undefined because we don’t have a return statement in our

game generator.What if we want to save the position in a database via API. Let’s

write a save function in our player which

simulates an async process.

function player() {

var position = 0;

return {

moveLeft(steps) {...},

moveRight(steps) {...},

getPosition() {...},

save() {

return new Promise(resolve => setTimeout(() => resolve('successful'), 1000));

}

}

}When we call save we will receive a promise which gets

resolved a second later. Inside the game generator the

usage of that function will look synchronous but in fact is not:

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

const position = yield call(player.getPosition);

console.log(`The position is ${ position }.`);

const resultOfSaving = yield call(player.save);

console.log(`Saving is ${ resultOfSaving }.`);

}Our receiver now has to be smart enough to understand

that the result of this particular command is a promise. It should also

wait till that promise is resolved and resume the generator with the

resolved value.

function receiver(generator) {

const iterate = function ({ value, done }) {

if (done) return value;

if (value.type === 'call') {

const result = value.func(...value.args);

if (result && typeof result.then !== 'undefined') { // <-- Oh wait, that's a promise

result.then(resolvedValue => iterate(generator.next(resolvedValue)));

} else {

return iterate(generator.next(result));

}

}

}

return iterate(generator.next());

}We now examine the result of the command and check if it has a

then method. If yes we assume that this is a promise. We

wait till it is resolved and again continue with the same recursion. If

we run the code we will see The position is -1. and then a

second later Saving is successful.. Here we can see the

beauty of this pattern. Because of the pause-resume characteristic of

the generator we are able to handle an asynchronous operation and hide

it behind synchronous code.

Let’s extract the two console logs into a separate generator called

finish:

function* finish(player) {

const position = yield call(player.getPosition);

console.log(`The position is ${ position }.`);

const resultOfSaving = yield call(player.save);

console.log(`Saving is ${ resultOfSaving }.`);

}

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

yield call(finish, player);

console.log('finish');

}The trivial approach for handing this case is to call the

receiver again with the result of the command. The code

looks like this:

function receiver(generator) {

const iterate = function ({ value, done }) {

if (done) return value;

if (value.type === 'call') {

const result = value.func(...value.args);

if (result && typeof result.then !== 'undefined') {

result.then(resolvedValue => iterate(generator.next(resolvedValue)));

} else if (result && typeof result.next !== 'undefined') { // <-- Oh wait, that's a generator

return iterate(generator.next(receiver(result)));

} else {

return iterate(generator.next(result));

}

}

}

return iterate(generator.next());

}So, if it happens that the result of the command is another generator

we iterate over it again using the same receiver function.

The thing is that the new line

iterate(generator.next(receiver(result))) is actually

synchronous while we may have asynchronous processes in that new

generator. If we run the code above we will see:

The position is -1.

finishSaving is successful.While finish should be displayed at the end. So,

yield call(finish, player) is not blocking the

generator.

We have to be smarter and say “Ok, run the new generator but let

me know when it is completed so I can continue iterating the main

one.”. To satisfy this case we have to make our

receiver a little bit more complicated and assume that it

always works asynchronously.

function receiver(generator) {

return new Promise(generatorCompleted => {

const iterate = function ({ value, done }) {

if (done) {

return generatorCompleted(value);

}

if (value.type === 'call') {

const result = value.func(...value.args);

if (result && typeof result.then !== 'undefined') {

result.then(resolvedValue => iterate(generator.next(resolvedValue)));

} else if (result && typeof result.next !== 'undefined') {

receiver(result).then(resultOfGenerator => {

iterate(generator.next(resultOfGenerator))

});

} else {

return iterate(generator.next(result));

}

}

}

iterate(generator.next());

});

}Now the receiver function returns a promise. It gets

resolved when the generator is completed. If done is

true we simply resolve the promise. Which perfectly cover

our case and helps us asynchronously handle the internal generator.

receiver(result).then(resultOfGenerator => {

iterate(generator.next(resultOfGenerator))

});Instead of using call for chaining with another

generator we could simply yield it like so:

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

yield * finish(player);

console.log('finish');

}Guess what? We don’t have to change our receiver to make

this work. It just works because when we use yield * we are

delegating a generator. For the code that iterates, the whole thing

looks like a single generator. We just continue calling

next until we pass all the yield statements

(in the main AND delegated generators).

So far everything was working with no issues. But what if some of our commands throws an error. Let’s say that our player can not jump. If someone tries to make it jump we throw an error:

function player() {

var position = 0;

return {

moveLeft(steps) {...},

moveRight(steps) {...},

getPosition() {...},

save() {...},

jump() {

throw new Error(`You ain't jump!`);

}

}

}To handle the error we have to wrap the execution of the command in a try-catch block:

function receiver(generator) {

return new Promise(generatorCompleted => {

const iterate = function ({ value, done }) {

if (done) {

return generatorCompleted(value);

}

if (value.type === 'call') {

try { // calling

value.func(...value.args) // checking for a promise or another generator

} catch(error) {

iterate(generator.throw(error));

}

}

}

iterate(generator.next());

});

}This is the first time where we see generator.throw

method. It resumes the generator by throwing an error inside. It is a

really nice way to say “Hey, I got an error from your command. Here

it is, handle it.”. Together with throwing an error

throw is a little bit like calling next it

moves the generator forward and we got again

{ done: ..., value: ... } object as a result. So, we just

pass it to the iterate function in order to continue the

recursion. Here is how we handle the error in the game

generator function:

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

try {

yield call(player.jump);

} catch(error) {

console.log(`Ops, ${ error }`);

}

yield call(finish, player);

console.log('finish');

}And the result in the console is:

Ops, Error: You ain't jump!

The position is -1.

Saving is successful.finishThat is nice, we handled a synchronous command error. What if some of our async processes fail? Let’s create another method in our player that again returns a promise but that promise gets rejected:

function player() {

var position = 0;

return {

moveLeft(steps) { position -= steps; },

moveRight(steps) { position += steps; },

getPosition() { return position; },

save() {...},

jump() {...},

cheat() {

return new Promise((resolve, reject) => {

setTimeout(() => reject('sorry'), 1000)

});

}

}

}The receiver now has to be aware of the fact that the

promise may be rejected and should again use throw to send

the error in our game generator. The change that we have to

do is around the code that handles the promise. then method

accepts a second argument which is function fired when the promise is

rejected. We just do the same - continue the iteration by calling

iterate with generator.throw’s result as a

parameter.

if (result && typeof result.then !== 'undefined') {

result.then(resolvedValue =>

iterate(generator.next(resolvedValue)),

error => iterate(generator.throw(error))

);

}In order to catch the error we have to again wrap our

yield call into a try-catch block.

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

try {

yield call(player.jump);

} catch(error) {

console.log(`Ops, ${ error }`);

} try {

yield call(player.cheat);

} catch (error) {

console.log(`Ops, ${ error }`);

}

yield call(finish, player);

console.log('finish');

}Now the result of the whole thing becomes:

Ops, Error: You ain't jump!

Ops, sorry

The position is -1.

Saving is successful.finishThis is how we handle errors. It first happens in the code that

iterates (the receiver) and then the errors are passed down

to the generator.

Here is the final code of our receiver:

function receiver(generator) {

return new Promise(generatorCompleted => {

const iterate = function ({ value, done }) {

if (done) { return generatorCompleted(value); }

if (value.type === 'call') {

try {

const result = value.func(...value.args);

if (result && typeof result.then !== 'undefined') {

result.then( resolvedValue =>

iterate(generator.next(resolvedValue)),

error => iterate(generator.throw(error))

);

} else if (result && typeof result.next !== 'undefined') {

receiver(result).then(resultOfGenerator => {

iterate(generator.next(resultOfGenerator))

});

} else {

return iterate(generator.next(result));

}

} catch(error) {

iterate(generator.throw(error));

}

}

}

iterate(generator.next());

});

}And here is a CodePen to play with it:

See the Pen Implementation of the command pattern using generators by Krasimir Tsonev (@krasimir) on CodePen.

I learned this pattern from the redux-saga project. You will see a

similar call helper there but the library is Redux

specific. So I decided to extract the code above into a npm module. Here

is the same example but using banica library.

import { run, call } from 'banica';

function player() {

var position = 0;

return {

moveLeft(steps) { ... },

moveRight(steps) { ... },

getPosition() { ... },

save() { ... },

jump() { ... },

cheat() { ... }

}

}

function* finish(player) {

const position = yield call(player.getPosition);

console.log(`The position is ${ position }.`);

const resultOfSaving = yield call(player.save);

console.log(`Saving is ${ resultOfSaving }.`);

}

function* game(player) {

yield call(player.moveLeft, 2);

yield call(player.moveRight, 1);

try {

yield call(player.jump);

} catch(error) {

console.log(`Ops, ${ error }`);

} try {

yield call(player.cheat);

} catch (error) {

console.log(`Ops, ${ error }`);

}

yield call(finish, player);

console.log('finish');

}

run(game(player()));(Why I call it “banica”? Well, that’s one of my favorite Bulgarian dishes. More about it here).

This type of command pattern implementation together with the idea of the state machines are game changers for me this year. I hope you enjoy this article and I made you experiment more with generators. And why not try redux-saga or banica libraries.

Peleke Sengstacke | April 11, 2016

With the ES2015 spec finalized and Node.js shipping with a substantial subset of its functionailty, it’s safe to say it: The Future is Upon Us.

…I’ve always wanted to say that.

But, it’s true. The V8 Engine is swiftly approaching spec-compliance, and Node ships with a good selection of ES2015 features ready for production. It’s this latter list of features that I consider the Essentials™, as it represents the set of feature we can use without a transpiler like Babel or Traceur.

This article will cover three of the more popular ES2015 features available in Node:

let and const;Let’s get to it.

let and constScope refers to where in your program your variables are visible. In other words, it’s the set of rules that determines where you’re allowed to use the variables you’ve declared.